Standard GEO reporting tells you how many AI citations you earned. Advanced GEO analytics tells you why — and that distinction is where most teams leave significant optimisation potential on the table. When a page stops earning Perplexity citations after three months of strong performance, standard reporting shows a drop. Advanced analytics shows whether the cause was a RAG retrieval score decline, a competitor publishing fresher structured content, or a query pattern shift that your existing content no longer matches. The difference between those diagnoses is the difference between a content refresh that works and one that misses the actual problem entirely.

What RAG Means for GEO Performance — and Why Most Teams Ignore It

RAG (Retrieval-Augmented Generation) is the architecture in which an AI system first retrieves pages from an index and then generates a response grounded in them. Its retrieval stage decides which pages get pulled, and understanding that stage changes how you diagnose GEO performance gaps.

When a user types a question into Perplexity or triggers a Google AI Overview, the system does not generate an answer from its training data alone. It first retrieves relevant content from its index, pulling the pages most likely to contain accurate, citable answers. That retrieval step is the "R" in RAG. The generation step is what the user sees. But the retrieval step determines whose content gets used.

Most GEO teams focus on the output — citation counts, impression numbers, referral traffic. Almost none analyse the retrieval step that produces those outputs. That is the gap. If your content is not being retrieved, it will never be cited — regardless of how well it is written or how clean your schema is.

RAG retrieval is not random. It scores content on a set of signals and pulls the highest-scoring candidates into the generation process. Understanding those signals is what separates advanced GEO analytics from basic performance reporting.

For teams building the foundational measurement layer before moving to advanced analytics, our GEO metrics dashboard guide covers the core dashboard setup that feeds the data advanced analytics depends on.

💡 Pro-Tip: Run the same query in Perplexity on five consecutive days and note which sources appear each time. RAG retrieval is not perfectly deterministic — the same query produces slightly different source sets across sessions. Pages that appear consistently across all five sessions are high-confidence retrieval assets. Pages that appear once or twice are marginal candidates. That consistency pattern tells you more about retrieval strength than any single citation check.
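The five-day check above can be tallied with a few lines of Python. This is a minimal sketch: the URLs are made up, the source lists are assumed to be copied by hand from each session, and the thresholds (every session counts as high-confidence, two of five or fewer as marginal) are one reading of the tip rather than a fixed rule.

```python
from collections import Counter

def retrieval_consistency(sessions):
    """Return {source: share of sessions in which the source appeared}."""
    if not sessions:
        return {}
    counts = Counter()
    for sources in sessions:
        counts.update(set(sources))  # count each source once per session
    return {source: n / len(sessions) for source, n in counts.items()}

def classify(share, strong=1.0, marginal=0.4):
    """Label a source by how consistently it was retrieved (assumed thresholds)."""
    if share >= strong:
        return "high-confidence"
    if share <= marginal:
        return "marginal"
    return "intermediate"

# Hypothetical sources noted for the same query on five consecutive days.
sessions = [
    ["example.com/faq-schema", "competitor.com/guide"],
    ["example.com/faq-schema", "other.org/post"],
    ["example.com/faq-schema", "competitor.com/guide"],
    ["example.com/faq-schema"],
    ["example.com/faq-schema", "other.org/post"],
]

for source, share in sorted(retrieval_consistency(sessions).items()):
    print(f"{source}: {share:.0%} of sessions, {classify(share)}")
```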

The Signals RAG Systems Use to Select Content for Citation

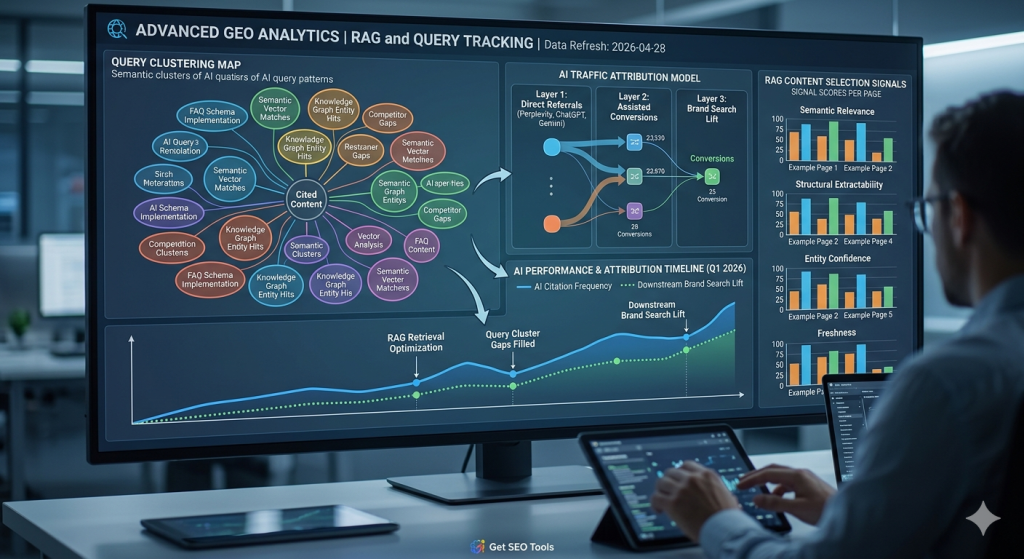

RAG systems score content on four primary signals: semantic relevance, structural extractability, entity confidence, and freshness. Each signal operates independently — and weakness in any one reduces retrieval probability even when the others are strong.

Semantic relevance is the primary gate. The content must match the query intent at the concept level — not just the keyword level. A page on “how to implement FAQ schema for AI citations” matches a query about structured data for AI visibility. A page on “schema markup best practices” is topically adjacent but semantically less precise. RAG systems apply vector similarity scoring to measure this match. Content that scores high on semantic relevance gets pulled into consideration. Content that scores lower gets filtered out before generation begins.
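To make vector similarity scoring concrete, here is a minimal sketch of cosine similarity, a common way to compare embedding vectors. The three-dimensional vectors are invented stand-ins; real systems use learned embeddings with hundreds of dimensions, and nothing here reflects how any specific AI platform scores content.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Hypothetical embeddings (illustrative values only).
query = [0.9, 0.8, 0.1]          # "structured data for AI visibility"
page_precise = [0.8, 0.9, 0.2]   # "how to implement FAQ schema for AI citations"
page_adjacent = [0.3, 0.9, 0.7]  # "schema markup best practices"

precise_score = cosine_similarity(query, page_precise)
adjacent_score = cosine_similarity(query, page_adjacent)
print(f"precise page:  {precise_score:.3f}")
print(f"adjacent page: {adjacent_score:.3f}")
```

With these made-up vectors the precise page scores higher than the adjacent one, which is the ordering the paragraph describes.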

Structural extractability determines whether the retrieved content can be cleanly used. A page with a direct answer in the first paragraph, FAQ schema on the relevant questions, and self-contained paragraphs scores high. A page with the same information buried in flowing prose with no structural markers scores low — even if the semantic relevance is identical. The RAG system needs content it can extract without reconstruction.

Entity confidence reflects how well the author and publisher are verified in the AI system’s knowledge graph. Pages from entities with confirmed Person schema, verified sameAs links, and consistent sitewide entity declarations score higher on this signal. Gemini weights entity confidence heavily — it applies E-E-A-T evaluation as part of its retrieval scoring before generation begins.
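For reference, the Person markup with sameAs links mentioned above might look like the output of this sketch. The property names (`@type`, `sameAs`, `worksFor`) are standard schema.org vocabulary; the helper function, the person, and every URL are hypothetical placeholders.

```python
import json

def person_jsonld(name, url, same_as, org_name, org_url):
    """Build a schema.org Person object linked to its publisher (hypothetical helper)."""
    return {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "url": url,
        "sameAs": list(same_as),  # profiles that confirm the same identity
        "worksFor": {"@type": "Organization", "name": org_name, "url": org_url},
    }

# Placeholder values for illustration only.
author = person_jsonld(
    name="Jane Example",
    url="https://example.com/authors/jane-example",
    same_as=["https://www.linkedin.com/in/jane-example"],
    org_name="Example Media",
    org_url="https://example.com",
)

# Paste the output into a <script type="application/ld+json"> tag on the page.
print(json.dumps(author, indent=2))
```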

Freshness is the fourth signal. RAG systems prefer recently updated content for queries where recency matters — current tool comparisons, updated statistics, recent methodology changes. A page last updated 18 months ago competes at a disadvantage against a fresher page on the same topic, even if the original content was stronger. Regular content refreshes maintain freshness scores without requiring full rewrites.
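A simple way to keep an eye on this is to flag pages whose last update is older than a chosen cutoff. The sketch below assumes you maintain a mapping of URL to last-updated date; the 540-day cutoff (roughly the 18 months mentioned above) and the URLs are assumptions, not a threshold any AI system has published.

```python
from datetime import date

def stale_pages(last_updated, today, max_age_days=540):
    """Return (url, age_in_days) for pages older than the cutoff, oldest first."""
    ages = ((url, (today - updated).days) for url, updated in last_updated.items())
    return sorted(
        (item for item in ages if item[1] > max_age_days),
        key=lambda item: item[1],
        reverse=True,
    )

# Hypothetical content inventory.
inventory = {
    "example.com/faq-schema-guide": date(2023, 10, 1),
    "example.com/entity-markup": date(2025, 3, 1),
}

for url, age in stale_pages(inventory, today=date(2025, 6, 1)):
    print(f"{url} was last updated {age} days ago")
```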

These four signals map directly to the GEO optimisation layers covered across this content cluster. Semantic relevance connects to long-tail content strategy. Structural extractability connects to schema and content format. Entity confidence connects to Person and Organization schema. Freshness connects to content update cadence. For the complete picture of how these signals interact in a unified GEO strategy, our GEO long-tail keyword strategy guide covers the cross-platform citation framework that ties all four signals together.

Query Clustering: Finding the AI Prompt Patterns That Drive Your Citations

Query clustering groups the prompt patterns that trigger AI citations for your content into thematic clusters — revealing which topic areas you dominate and which you are missing entirely.

Standard GEO reporting shows you individual query data. A page earned citations on “how to add FAQ schema” and “FAQ schema JSON-LD example.” That is two data points. Query clustering groups those two queries with ten similar ones — “best way to write FAQ schema,” “FAQ schema for WordPress,” “does FAQ schema still work” — into a single topic cluster: FAQ schema implementation.

That cluster view changes your optimisation logic completely. Instead of optimising for individual queries, you optimise for the cluster. A single well-structured page with comprehensive FAQ coverage on the cluster topic can earn citations across all twelve related queries simultaneously. A series of thin pages targeting individual keywords earns one citation per page at best.

The practical process for query clustering uses your GSC AI Overview query data as the input. Export your last 90 days of AI Overview queries. Group them by semantic similarity: manually for smaller data sets, or, for larger ones, in a spreadsheet where a keyword-to-cluster lookup table (for example via VLOOKUP) assigns each query to a group. Each group becomes a topic cluster. Clusters where you have strong citation presence identify your GEO strengths. Clusters where you have zero or minimal citation data identify your highest-priority content gaps.
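For larger exports, the grouping step can be roughed out in code. This sketch clusters queries by word overlap (Jaccard similarity), which is only a crude stand-in for semantic similarity. The stopword list, the 0.3 threshold, and the sample queries are all assumptions to tune against your own data.

```python
STOPWORDS = frozenset({"how", "to", "for", "the", "a", "does", "best", "way"})

def tokens(query):
    """Lowercased words of a query, minus filler words."""
    return {w for w in query.lower().split() if w not in STOPWORDS}

def jaccard(a, b):
    """Share of words two token sets have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def cluster_queries(queries, threshold=0.3):
    """Greedy clustering: join the cluster holding the most similar query."""
    clusters = []  # each cluster is a list of (query, token_set)
    for query in queries:
        t = tokens(query)
        best, best_score = None, 0.0
        for cluster in clusters:
            score = max(jaccard(t, member) for _, member in cluster)
            if score > best_score:
                best, best_score = cluster, score
        if best is not None and best_score >= threshold:
            best.append((query, t))
        else:
            clusters.append([(query, t)])
    return [[q for q, _ in cluster] for cluster in clusters]

# Hypothetical export rows.
queries = [
    "how to add FAQ schema",
    "FAQ schema JSON-LD example",
    "FAQ schema for WordPress",
    "brand search lift measurement",
    "measure brand search lift",
]
clusters = cluster_queries(queries)
for group in clusters:
    print(group)
```

Word overlap will miss paraphrases that share no vocabulary, so treat the output as a first pass to review by hand.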

The gap clusters are the most valuable output. They show you exactly which topic areas your competitors are earning citation share on — without you. Each gap cluster is a content brief. A page targeting that cluster with correct structure and schema can enter the citation pool within weeks of publication.

According to Semrush’s 2025 content gap research, sites that identified and filled AI query cluster gaps showed 3.1 times higher citation growth rates over 6 months compared to sites that optimised existing pages without addressing gap clusters. The new content fills retrieval slots that current content cannot reach regardless of how well it is optimised.

💡 Pro-Tip: When building query clusters from GSC data, include the queries where you have zero AI Overview impressions — not just the ones where you appear. Export your full organic query list and identify which query clusters have zero AI impressions alongside their organic performance. A cluster generating organic clicks but no AI impressions is a clear structural signal — the content exists and ranks, but is not structured for AI extraction. That is a schema and format fix, not a content creation task.
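The triage described in this tip can be expressed as a small rule set. The sketch below assumes each exported row already carries a cluster label plus organic click and AI impression counts; the field names and sample numbers are hypothetical.

```python
def classify_clusters(rows):
    """Label each cluster as cited, a structural fix, or a content gap."""
    totals = {}
    for row in rows:
        t = totals.setdefault(row["cluster"], {"organic_clicks": 0, "ai_impressions": 0})
        t["organic_clicks"] += row["organic_clicks"]
        t["ai_impressions"] += row["ai_impressions"]

    labels = {}
    for cluster, t in totals.items():
        if t["ai_impressions"] > 0:
            labels[cluster] = "cited"
        elif t["organic_clicks"] > 0:
            # Ranks organically but earns no AI impressions: schema and format fix.
            labels[cluster] = "structural fix"
        else:
            # No organic or AI presence: new content is needed.
            labels[cluster] = "content gap"
    return labels

# Hypothetical rows from a combined query export.
rows = [
    {"cluster": "faq schema", "organic_clicks": 120, "ai_impressions": 45},
    {"cluster": "entity markup", "organic_clicks": 80, "ai_impressions": 0},
    {"cluster": "entity markup", "organic_clicks": 15, "ai_impressions": 0},
    {"cluster": "ai attribution", "organic_clicks": 0, "ai_impressions": 0},
]
labels = classify_clusters(rows)
print(labels)
```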

AI Performance Measurement: Attribution Models That Actually Work

AI-attributed traffic requires a different attribution model than organic SEO — because the citation-to-click path involves multiple touchpoints that standard last-click attribution misses.

The standard last-click attribution model credits the final click before conversion. For AI-driven traffic, this model consistently undercounts GEO’s contribution. A user discovers your brand through a Perplexity citation, does not click through, then searches your brand name on Google two days later and converts through an organic click. Last-click attribution credits organic search. The Perplexity citation that created the brand awareness gets no credit.

A more accurate attribution model for AI-driven traffic uses a three-layer approach. Layer one tracks direct AI referral conversions: sessions from perplexity.ai, chat.openai.com (plus chatgpt.com, the domain ChatGPT now uses), and gemini.google.com that convert in the same session. Layer two tracks brand search lift: increases in branded organic search volume that correlate with periods of high AI citation activity. Layer three tracks assisted conversions: conversion paths in GA4 where an AI referral visit appears in the path even if it was not the final touchpoint.
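Layers one and three can be separated with a simple rule over each conversion path. This sketch assumes you can export, per conversion, the ordered list of session referrer URLs with the converting session last; that export format, the function names, and the sample paths are hypothetical. Layer two (brand search lift) is a trend comparison and is not covered here.

```python
from urllib.parse import urlparse

# Hosts named in the text; extend the set as AI platforms change domains.
AI_REFERRER_HOSTS = {"perplexity.ai", "chat.openai.com", "gemini.google.com"}

def is_ai_referrer(url):
    """True if the referrer URL belongs to one of the tracked AI platforms."""
    host = (urlparse(url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return host in AI_REFERRER_HOSTS

def attribute(path):
    """Classify a conversion path (referrers in order, converting session last)."""
    if not path:
        return "none"
    if is_ai_referrer(path[-1]):
        return "direct_ai"    # layer one: converted in the AI referral session
    if any(is_ai_referrer(url) for url in path[:-1]):
        return "ai_assisted"  # layer three: AI referral earlier in the path
    return "other"

# Hypothetical conversion paths.
paths = {
    "order-1": ["https://www.perplexity.ai/search?q=faq+schema"],
    "order-2": ["https://chat.openai.com/", "https://www.google.com/"],
    "order-3": ["https://www.google.com/"],
}
results = {order: attribute(path) for order, path in paths.items()}
print(results)
```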

Brand search lift is the most underestimated layer. When Perplexity or ChatGPT cites your brand repeatedly across different queries, users who see those citations but do not click immediately often search your brand name later. That branded search volume increase is a direct downstream effect of AI citation activity — measurable in GSC by filtering for your brand name queries and overlaying the timeline against your AI impression data.
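One way to quantify that overlay is a lagged correlation between weekly AI impressions and weekly branded clicks. The sketch below uses a plain Pearson correlation; the weekly figures and the one-week lag are invented for illustration, and a strong correlation suggests a relationship without proving it.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)

def lagged_correlation(ai_impressions, branded_clicks, lag_weeks=0):
    """Correlate AI impressions in week t with branded clicks in week t + lag."""
    if lag_weeks == 0:
        return pearson(ai_impressions, branded_clicks)
    return pearson(ai_impressions[:-lag_weeks], branded_clicks[lag_weeks:])

# Hypothetical weekly series (same weeks, same order).
ai_impressions = [10, 20, 30, 40, 50]
branded_clicks = [0, 12, 22, 32, 42]

same_week = lagged_correlation(ai_impressions, branded_clicks)
one_week_later = lagged_correlation(ai_impressions, branded_clicks, lag_weeks=1)
print(f"same week: {same_week:.2f}, one week later: {one_week_later:.2f}")
```

Trying a few lag values is useful because branded searches may trail the citations that prompted them.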

For teams connecting this attribution data to the knowledge graph signals that drive entity recognition, our guide on GEO knowledge graph and entity mapping covers how entity relationships in structured data feed directly into RAG retrieval scoring and brand recognition across AI platforms.

Advanced GEO Analytics vs Standard GEO Reporting

| Dimension | Standard GEO Reporting | Advanced GEO Analytics |
|---|---|---|
| Primary data source | GSC AI Overview impressions + GA4 referral traffic | GSC + GA4 + manual citation data + RAG signal analysis |
| Query analysis | Individual query performance | Query cluster analysis: topic area coverage and gaps |
| Attribution model | Last-click or session-level | Three-layer: direct AI referral + brand search lift + assisted conversions |
| Competitive analysis | Manual citation checks for competitor presence | Systematic cluster-level competitor citation mapping |
| Diagnostic capability | Identifies performance changes (up or down) | Identifies why performance changed: RAG signal, competitor, freshness, or structural gap |
| Action output | Content to refresh or schema to fix | Specific RAG signal to improve per page + gap clusters to fill + attribution shift to investigate |
| Time to insight | Available monthly | Requires 90-day data baseline before cluster patterns are reliable |

Putting It Together: The Advanced GEO Analytics Workflow

Advanced GEO analytics runs on a monthly cycle — not a daily one — because the patterns it identifies require 90 days of baseline data to be meaningful.

Month one through three is the baseline period. During this phase, collect GSC AI Overview query data, manual citation check results across Perplexity, ChatGPT, and Gemini, and GA4 referral traffic from AI sources. Do not make optimisation decisions based on individual data points. Build the cluster map. Establish the attribution baseline. Identify your top ten citation-earning pages and your five highest-priority gap clusters.

Month four onwards is the optimisation cycle. Each month, review the cluster map for three changes: new gap clusters that appeared in your GSC data, existing clusters where citation share shifted, and pages where RAG signals — structural extractability or freshness — declined. Each finding maps to a specific action. New gap cluster means create targeted content. Citation share shift means run a content refresh or schema audit on the affected pages. RAG signal decline means update the opening paragraph structure and check schema validity.
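The finding-to-action mapping above is mechanical enough to script. This sketch compares two monthly snapshots of citation share per cluster and returns the matching action. The snapshot format, the 10-point shift threshold, and the sample figures are assumptions; page-level RAG signal checks would need their own inputs and are only listed in the mapping.

```python
ACTIONS = {
    "new_gap_cluster": "Create targeted content",
    "citation_share_shift": "Run a content refresh or schema audit on the affected pages",
    "rag_signal_decline": "Update the opening paragraph structure and check schema validity",
}

def review_cluster_map(previous, current, shift_threshold=0.10):
    """Compare citation share per cluster between two months; return (cluster, action)."""
    findings = []
    for cluster, share in current.items():
        if cluster not in previous:
            if share == 0:
                findings.append((cluster, "new_gap_cluster"))
        elif abs(share - previous[cluster]) >= shift_threshold:
            findings.append((cluster, "citation_share_shift"))
    return [(cluster, ACTIONS[kind]) for cluster, kind in findings]

# Hypothetical citation share per cluster (0.0 to 1.0).
last_month = {"faq schema": 0.40, "entity markup": 0.25}
this_month = {"faq schema": 0.25, "entity markup": 0.27, "ai attribution": 0.0}

plan = review_cluster_map(last_month, this_month)
for cluster, action in plan:
    print(f"{cluster}: {action}")
```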

The attribution model review runs quarterly. Pull brand search volume trends from GSC branded query data. Compare against periods of high and low AI impression activity. The correlation tells you how much of your branded organic traffic is downstream of AI citation activity — which is the true measure of GEO’s contribution to your overall search performance.

For teams connecting advanced analytics back to the citation benchmarks that tell you whether your performance is strong or weak relative to your niche, our AI citation benchmarks guide covers the niche-level reference data that makes advanced analytics findings interpretable rather than just descriptive.

According to BrightEdge’s 2025 measurement research, teams running advanced GEO analytics with RAG signal analysis and query clustering identified content optimisation opportunities 8 weeks earlier on average than teams using impression-only reporting. That 8-week advantage compounds significantly on competitive topics where citation slots shift frequently.

Frequently Asked Questions

What is RAG and how does it affect GEO performance?

RAG stands for Retrieval-Augmented Generation. It is the process AI systems use to retrieve relevant content from an index before generating a response. Content that scores highest on semantic relevance, structural extractability, entity confidence, and freshness is retrieved most frequently, and only retrieved content can become cited content.

What is AI query clustering in GEO analytics?

AI query clustering groups the prompt patterns that trigger citations for your content into thematic clusters. Each cluster reveals a topic area where your content is — or is not — earning citation share. Clustering helps identify content gaps at the query pattern level rather than the keyword level, which is more actionable for GEO optimisation.

How do you attribute traffic to AI citations specifically?

Track referral traffic from perplexity.ai, chat.openai.com (plus chatgpt.com, the domain ChatGPT now uses), and gemini.google.com in Google Analytics 4. For Google AI Overviews, filter Google Search Console data by search type: AI Overviews. Combine both data sources in a Looker Studio dashboard to get a unified view of AI-attributed sessions, engagement, and conversions.

What signals does a RAG system use to select content?

RAG systems score content on semantic relevance to the query, structural extractability, entity confidence, and freshness. Semantic relevance is the primary signal. Structural extractability determines whether the content can be cleanly pulled without context dependence. Entity confidence reflects how well the author and publisher are verified.

How is advanced GEO analytics different from standard GEO reporting?

Standard GEO reporting tracks impression and click counts. Advanced GEO analytics connects those counts to the RAG retrieval signals behind them, using query clustering, entity confidence scoring, content structure analysis, and attribution modelling across multiple AI platforms. It answers not just how many citations you earned, but why specific pages earn them and others do not.

Key Takeaways

- RAG retrieval determines which pages get cited — understanding the four retrieval signals (semantic relevance, structural extractability, entity confidence, freshness) is the foundation of advanced GEO analytics.

- Query clustering reveals gap clusters — topic areas where competitors earn citation share and your content does not appear. Each gap cluster is a content brief with confirmed citation demand.

- Last-click attribution undercounts GEO’s contribution — a three-layer model covering direct AI referrals, brand search lift, and assisted conversions gives a more accurate picture of AI citation value.

- Brand search lift is the most underestimated GEO metric — increased branded search volume that correlates with high AI citation activity reveals the downstream awareness value of citations that never produce direct clicks.

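- According to BrightEdge’s 2025 measurement research, teams using RAG signal analysis and query clustering identified content optimisation opportunities 8 weeks earlier on average than teams using impression-only reporting, giving them a significant head start on filling citation gaps before competitors.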

- Advanced GEO analytics requires a 90-day baseline — individual data points are not meaningful until cluster patterns emerge from 3 months of consistent data collection.

- The monthly optimisation cycle has three actions: fill new gap clusters with targeted content, refresh pages where citation share shifted, and fix RAG signals on pages where structural or freshness scores declined.