Internal linking architecture is the structural system that determines how PageRank distributes across a domain, how deep Googlebot reaches into the URL graph per crawl cycle, and how topical authority accumulates in cluster hubs. Most teams treat internal linking as an editorial task — a few contextual references added at publication time. At enterprise scale, that approach produces authority silos, orphaned URL populations, and crawl depth failures that compound quietly for months before surfacing in ranking data.

This guide builds the complete internal linking architecture system: graph topology selection, authority flow modeling, hub design, anchor text governance, crawl depth engineering, and deployment validation. The upstream structural decisions this framework depends on — URL generation, template design, crawl path control — are defined in the technical SEO architecture framework. Internal linking operates on top of that structural layer, not independently of it.

What Internal Linking Architecture Controls at the System Level

Internal links perform three functions simultaneously: they define the crawl graph Googlebot traverses, they distribute PageRank across the URL set, and they communicate topical relationships between documents. A useful working model treats a site’s internal link graph as a directed weighted graph: each URL is a node, each link is an edge, and edge weight reflects the authority of the source page combined with the signal strength of the anchor context and link position. Google does not publish its exact weighting, so treat this as a planning model rather than a description of Google’s internals.

Architectural failures manifest as measurable, traceable problems. Pages with strong topical relevance receive insufficient crawl frequency because they sit at depth five in the link graph. Cluster hubs accumulate inbound equity from supporting articles but distribute nothing outward. Commercial pages receive equity from editorial content through anchors so generic — “learn more,” “read this” — that no topical relevance signal transfers.

Google’s Search documentation states that Google uses links to find new pages to crawl and as a relevance signal, and that internal links help it understand site structure and content relationships. The operational implication is that internal linking architecture is a ranking-relevant variable under the site owner’s direct control. Consequently, implementation must happen at the template and governance layer, because page-level decisions cannot produce system-level outcomes at scale. For tooling that supports this process, see our Internal Linking Tool.

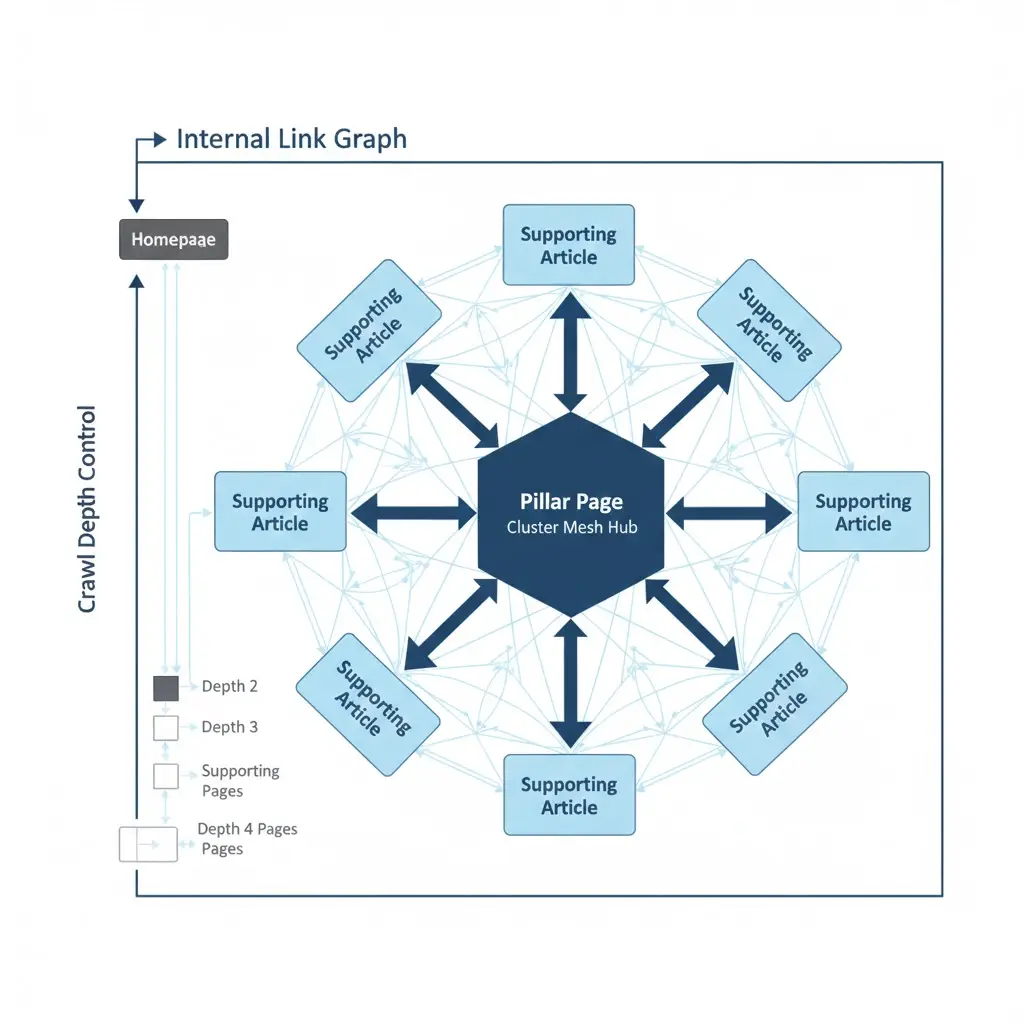

Graph Topology Selection

The link graph topology determines where authority concentrates and how it flows across the domain. Three primary models define the design space.

Hub-and-Spoke Topology

In hub-and-spoke, a central pillar page links to all supporting articles within its cluster. Supporting articles link back to the hub but not to each other. Authority concentrates at the hub; supporting pages receive hub equity through the hub’s outbound links to them but do not share equity laterally with peer articles.

This model is well-suited to topical authority concentration strategies where the objective is to maximize a single pillar page’s ranking signal for a competitive head keyword. The structural trade-off: cross-cluster topical relationships are absent from the link graph, which limits the domain’s ability to signal broad topical expertise beyond the hub keyword set.

Distributed Mesh Topology

In a distributed mesh, supporting articles link to the hub, to each other, and to adjacent cluster hubs. Authority distributes across the entire cluster set rather than concentrating at a single node. Google can model the full conceptual territory of the cluster through the link graph structure alone — richer topical signal, broader authority distribution.

However, mesh topologies require strict anchor text governance. When every supporting article links to every other with varied anchors, topical signal per link weakens relative to a tighter hub-and-spoke model. Unmanaged mesh implementations at scale produce noisy link graphs that reduce topical authority precision.

Hybrid Cluster Topology

The hybrid model combines hub concentration with lateral mesh linking within defined cluster boundaries. Supporting articles link to the hub and to topically adjacent supporting articles within the same cluster. Cross-cluster links connect hub-to-hub only. This preserves the authority concentration benefit of hub-and-spoke while enabling the topical breadth signaling of mesh linking within each cluster.

For most enterprise content operations, the hybrid cluster model produces the best combination of authority distribution efficiency and topical signal clarity. The operational requirement is a topical map defining which articles belong to which cluster and which cross-cluster hub connections are permitted — without this map, the hybrid model degrades into unstructured mesh over time. Use our Topic Cluster Tool to build and manage your topical map systematically.

Internal Linking Architecture — Crawl Depth Engineering

Within internal linking architecture, crawl depth is the most structurally significant determinant of crawl frequency. In server-log data, pages at depth two typically receive far more frequent Googlebot visits than pages at depth five. The relationship is usually not linear: each additional click of depth tends to reduce crawl frequency disproportionately, particularly on sites where non-canonical URL types consume a significant share of crawl budget.

Optimal Depth Targets by Page Type

The depth ceiling model defines maximum acceptable click depth by page value tier:

Primary commercial pages (top-revenue categories, flagship products, service pages): depth 2 maximum from the root.

Supporting commercial pages (subcategories, product variants, supporting service pages): depth 3 maximum.

Editorial and cluster content (blog posts, guides, supporting articles): depth 3–4 maximum.

Any indexable, revenue-relevant page beyond depth 4 is an architectural failure requiring link injection, not content optimization. The technical SEO crawl budget optimization framework defines how depth reduction interacts with crawl allocation — shorter crawl paths to canonical content directly increase refresh frequency for those pages.

Depth Flattening Without URL Restructuring

Reducing click depth for existing deep pages does not require URL restructuring. It requires link topology modification: adding contextual links to deep pages from hub pages at depth one or two. A single link from a depth-two hub page reduces the effective depth of the target to depth three. Multiple links from depth-one and depth-two pages create multiple short crawl paths, amplifying crawl priority.

Detection requires an internal link graph export. Use Screaming Frog or Sitebulb to export the internal link graph and calculate the shortest path from the homepage to every canonical URL. Pages with shortest path length greater than four are depth remediation targets. Prioritize by commercial value — depth five pages with high organic revenue contribution require link injection before depth five pages with minimal traffic.
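The shortest-path calculation is a breadth-first search from the homepage over the exported edge list. The sketch below assumes the export has already been reduced to (source, destination) pairs of followable links between canonical URLs, and that `revenue` is a hypothetical {url: organic revenue} mapping joined from analytics; the function names are illustrative, not part of any crawler’s API.

```python
from collections import deque


def click_depths(edges, root):
    """Breadth-first search from the root URL; returns {url: shortest click depth}."""
    graph = {}
    for source, destination in edges:
        graph.setdefault(source, []).append(destination)
    depths = {root: 0}
    queue = deque([root])
    while queue:
        url = queue.popleft()
        for destination in graph.get(url, []):
            if destination not in depths:  # first visit in BFS = shortest path
                depths[destination] = depths[url] + 1
                queue.append(destination)
    return depths


def depth_remediation_targets(depths, revenue, max_depth=4):
    """URLs deeper than max_depth, ordered by organic revenue (highest first)."""
    deep = [url for url, depth in depths.items() if depth > max_depth]
    return sorted(deep, key=lambda url: revenue.get(url, 0.0), reverse=True)
```

BFS fits this problem because every link counts as exactly one click, so the first time the search reaches a URL is its shortest depth; URLs that never appear in the result are unreachable from the homepage.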

Authority Flow Modeling — PageRank Distribution Analysis

PageRank flows through every internal link in the graph. The distribution is not equal: pages with more inbound internal links from high-authority sources accumulate more equity. Without modeling the flow explicitly, internal link decisions are made without understanding their quantitative impact on authority distribution.
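One way to model the flow explicitly is the PageRank power iteration from the original published formulation, run over the internal edge list only. This is a sketch under strong simplifying assumptions (uniform link weights, no external links, damping factor 0.85, duplicate links collapsed, pages without outbound links spreading their score evenly). Google’s production systems weight links in ways that are not public, so read the output as relative authority within the site, not as a Google metric.

```python
def pagerank(edges, damping=0.85, iterations=50):
    """Classic PageRank power iteration over an internal (source, destination) edge list."""
    nodes = sorted({url for edge in edges for url in edge})
    out_links = {url: set() for url in nodes}
    for source, destination in edges:
        if source != destination:  # self-links carry no equity in this model
            out_links[source].add(destination)
    n = len(nodes)
    rank = {url: 1.0 / n for url in nodes}
    for _ in range(iterations):
        # Score held by pages with no outbound links is redistributed evenly.
        dangling = sum(rank[url] for url in nodes if not out_links[url])
        new_rank = {url: (1.0 - damping) / n + damping * dangling / n for url in nodes}
        for source in nodes:
            targets = out_links[source]
            if targets:
                share = damping * rank[source] / len(targets)
                for destination in targets:
                    new_rank[destination] += share
        rank = new_rank
    return rank
```

Scores always sum to 1, so comparing a URL’s score before and after a proposed link change shows its relative gain or loss within the site.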

Building an Internal Authority Flow Model

The practical approach to authority flow modeling uses relative link counts as a proxy for PageRank distribution. Export the internal link graph. For each URL, calculate: total inbound internal links, number of inbound links from hub-tier pages, number of inbound links from high-traffic editorial pages, and total outbound internal links. The inbound-to-outbound ratio per page indicates whether the page is a net authority receiver or a net authority distributor.
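The link-count proxy reduces to a few counters over the edge list. In this sketch, `hub_urls` is a hypothetical set of hub-tier URLs taken from the topical map; the same pattern can count inbound links from high-traffic editorial pages by passing that set instead.

```python
from collections import Counter


def link_flow_profile(edges, hub_urls=frozenset()):
    """Per-URL inbound/outbound internal link counts and net receiver/distributor role."""
    inbound = Counter(destination for _, destination in edges)
    outbound = Counter(source for source, _ in edges)
    from_hubs = Counter(destination for source, destination in edges if source in hub_urls)
    profile = {}
    for url in set(inbound) | set(outbound):
        ins, outs = inbound[url], outbound[url]
        if ins > outs:
            role = "net receiver"
        elif outs > ins:
            role = "net distributor"
        else:
            role = "balanced"
        profile[url] = {
            "inbound": ins,
            "outbound": outs,
            "inbound_from_hubs": from_hubs[url],
            # No outbound links: the ratio is unbounded, i.e. a pure receiver.
            "in_out_ratio": ins / outs if outs else float("inf"),
            "role": role,
        }
    return profile
```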

Hub pages should be net receivers — they accumulate inbound equity from many sources. Commercial pages should be net receivers relative to their tier. Editorial content pages should be both receivers (from hub links) and distributors (linking to commercial targets and other editorial pages).

Pages that are pure distributors — many outbound links, few or no inbound links — are authority sinks. They distribute equity they do not themselves hold. Adding inbound links to these pages from hub or editorial content converts them from sinks to active nodes in the authority distribution network.

Equity Leak Detection

Equity leaks occur when high-value pages link to low-value destinations that do not return equity through a reciprocal or onward link. Common leak patterns: footer links to tag archives and author pages that are noindexed and provide no ranking value; header navigation links to utility pages (login, cart, account) that carry no topical relevance; contextual links within high-authority pillar content pointing to external domains without strategic justification.

Detection requires auditing outbound internal links from the top 20% of authority-holding pages on the domain. For each outbound link from a high-authority source, evaluate: does the destination page pass equity onward to a commercial or cluster page? Does the anchor provide topical signal? Is the destination indexed and ranking? Links from authority-holding pages to noindexed, thin, or off-topic destinations represent recoverable equity that should be redirected through link target changes or anchor text updates.
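A minimal version of the leak audit, assuming an `authority` score per URL (for example inbound link counts from the crawl export) and a `noindexed` set built from the crawl’s indexability column. It covers only the noindexed-destination check; the topical-signal and ranking questions still need human review.

```python
import math


def equity_leaks(edges, authority, noindexed, top_share=0.2):
    """Return (source, destination) links from the top `top_share` of pages,
    ranked by authority score, that point at noindexed destinations."""
    ranked = sorted(authority, key=authority.get, reverse=True)
    cutoff = max(1, math.ceil(len(ranked) * top_share))
    top_pages = set(ranked[:cutoff])
    return sorted(
        (source, destination)
        for source, destination in edges
        if source in top_pages and destination in noindexed
    )
```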

Anchor Text Governance Framework

Anchor text is the topical signal mechanism of each internal link. Generic anchors communicate navigation intent to users but provide no keyword relevance signal to crawlers. At scale, anchor text inconsistency across thousands of internal links produces ambiguous topical signals that force Google to resolve keyword-to-page mappings independently — often not in alignment with business intent.

Anchor Classification System

A functional anchor governance framework classifies every internal link anchor into one of four categories:

| Anchor Type | Example | Topical Signal | Permitted Use |
|---|---|---|---|
| Exact match | technical SEO audit | High: directly maps to destination keyphrase | 1–2 uses per destination URL; primary topical signal link |
| Partial match | run a full SEO audit | Medium: contextually relevant, less precise | Most common anchor type; safe for repeated use |
| Branded / URL | GetSEO Tools, getseo.tools/audit | Low for topical; high for brand authority | Navigation and brand-building contexts; not for topical linking |
| Generic | click here, read more, learn more | None | Avoid entirely in contextual internal linking; acceptable only in nav UI elements |

The operational rule: every contextual internal link in editorial content must use a partial match or exact match anchor that names the destination’s primary concept. Generic anchors in contextual positions are an architectural failure, not a style preference.
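The four-way classification can be approximated with simple string rules. In this sketch the generic-anchor list, the brand terms, and the rule that an anchor counts as partial match when it contains at least half of the destination keyphrase’s words are all hypothetical choices to tune against a real anchor export.

```python
# Hypothetical generic-anchor list; extend it from your own anchor export.
GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "read this", "this page"}


def classify_anchor(anchor, primary_keyphrase, brand_terms):
    """Return 'exact', 'partial', 'branded', 'generic', or 'unclassified'."""
    # Normalize case, collapse whitespace, and drop surrounding punctuation.
    text = " ".join(anchor.lower().split()).strip(".,;:!?")
    phrase = " ".join(primary_keyphrase.lower().split())
    if text in GENERIC_ANCHORS:
        return "generic"
    if text == phrase:
        return "exact"
    if text.startswith(("http://", "https://")) or any(b.lower() in text for b in brand_terms):
        return "branded"
    phrase_words = set(phrase.split())
    overlap = phrase_words & set(text.split())
    # Hypothetical threshold: at least half of the keyphrase's words must appear.
    if phrase_words and len(overlap) / len(phrase_words) >= 0.5:
        return "partial"
    return "unclassified"
```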

Anchor Consistency Enforcement

Anchor inconsistency at scale — where the same destination URL receives twenty different anchor texts across its inbound link set — dilutes the topical signal each link provides. The fix is an anchor target map: a document that specifies the approved primary anchor and two to three approved partial match variants for every cluster hub and commercial target page. Editorial teams reference this map at publication and update time. Any new internal link to a mapped destination uses only approved anchor variants.

Enforcement requires periodic crawl auditing. The technical SEO audit should include an anchor distribution report per target URL — identifying destinations receiving high generic anchor ratios and flagging them for remediation in the content update queue.
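An anchor distribution report can be built from the crawl export’s (source, destination, anchor) rows. This sketch flags destinations whose share of generic anchors exceeds a threshold; the 20% default and the generic-anchor list are assumptions, not figures from this guide.

```python
from collections import defaultdict

# Hypothetical generic-anchor list; extend it from your own anchor export.
GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "read this", "this page"}


def generic_anchor_report(links, threshold=0.2):
    """links: iterable of (source, destination, anchor).
    Returns {destination: generic anchor ratio} for destinations above threshold."""
    totals = defaultdict(int)
    generic = defaultdict(int)
    for _, destination, anchor in links:
        totals[destination] += 1
        if " ".join(anchor.lower().split()).strip(".,;:!?") in GENERIC_ANCHORS:
            generic[destination] += 1
    ratios = {dest: generic[dest] / totals[dest] for dest in totals}
    # Worst offenders first, so the list can feed the content update queue directly.
    return {
        dest: ratio
        for dest, ratio in sorted(ratios.items(), key=lambda item: item[1], reverse=True)
        if ratio > threshold
    }
```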

Orphan URL Detection and Prevention System

An orphaned URL is a crawlable, indexable page with no internal link pointing to it. Orphans exist outside the crawl graph — Googlebot cannot discover them through link traversal, they receive no equity from any source, and their crawl frequency depends entirely on sitemap inclusion or external link discovery. At any meaningful scale, orphan accumulation is the predictable consequence of publishing workflows that do not include a mandatory internal linking step.

Detection Protocol

Problem: orphaned indexable pages accumulate invisibly across the site, holding no authority and receiving minimal crawl attention regardless of content quality or topical relevance.

Cause: content publication workflows that treat internal linking as optional rather than mandatory. Template updates or site restructures that break existing internal link paths without creating replacements. CMS migrations that do not carry forward the internal link graph.

Detection: export all URLs from the XML sitemap, all URLs appearing in the internal link graph via a full site crawl from the homepage, and all URLs receiving Googlebot requests in server log data over the past 30 days. Combine the sitemap and log URL lists, then remove every URL that appears as an internal link destination in the crawl or as the final target of a redirect from a linked URL. What remains is the orphan candidate set; log-only URLs in it are orphans the sitemap also misses. Cross-reference the candidates against the GSC Page indexing report (formerly Coverage) to identify which are actually indexed versus merely listed in the sitemap.
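The comparison itself is set arithmetic. This sketch assumes the crawl started from the homepage (so every edge comes from a reachable page), that URLs are already normalized to their canonical form, and that `redirects` is a hypothetical mapping of single-hop redirects taken from the crawl. Any sitemap or log URL that never appears as an internal link destination, directly or through one redirect, is returned as a candidate.

```python
def orphan_candidates(sitemap_urls, log_urls, link_edges, redirects, root):
    """URLs known from the sitemap or server logs that no internal link reaches.

    link_edges: (source, destination) pairs from a crawl started at `root`.
    redirects: {redirecting_url: final_url}; a link to a redirecting URL
    counts as a link to its final target (single hop only).
    """
    linked = {destination for _, destination in link_edges}
    linked |= {redirects[url] for url in linked if url in redirects}
    linked.add(root)  # the homepage is the crawl's entry point, not an orphan
    return sorted((set(sitemap_urls) | set(log_urls)) - linked)
```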

Fix: every identified orphan requires at minimum one contextual internal link from a topically relevant indexed page. Prioritize orphan remediation by page value: commercial orphans before editorial orphans. Implement a publishing gate — no URL may be published without a confirmed internal link from at least one existing indexed page. Integrate this check into the technical SEO checklist as a pre-publish blocking condition.
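The publishing gate can be expressed as a single check. This sketch assumes the CMS can export the link edges that will exist once the release ships (including links added to existing pages in the same release) and a set of currently indexed URLs; both inputs and the function name are hypothetical.

```python
def passes_publish_gate(new_url, link_edges, indexed_urls):
    """True if at least one existing indexed page (not the new URL itself) links to new_url."""
    return any(
        destination == new_url and source in indexed_urls and source != new_url
        for source, destination in link_edges
    )
```

Run it as a blocking step in the publishing workflow: a `False` result stops publication until an editor adds a contextual link from an indexed page.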

JavaScript Rendering and Internal Link Discovery

Internal links rendered exclusively via JavaScript (navigation components, related content carousels, dynamically generated anchor tags) are not present in the raw HTML that Googlebot fetches at crawl time. They exist only in the rendered DOM, which Google processes after the page clears its rendering queue, so these links are discovered later and less reliably than links in the HTML response. For link topology purposes, a JavaScript-only internal link contributes little to crawl path efficiency or timely authority distribution.

The failure pattern is common in React and Vue SPAs where navigation menus, related post widgets, and breadcrumb components are client-side rendered. The site appears fully linked in the browser, but the raw HTML response contains minimal or no internal link structure. Googlebot’s crawl-time link graph is correspondingly sparse.

Detection: fetch the target URL via curl and extract all anchor tags from the raw response. Compare against the full anchor set visible in Chrome DevTools’ rendered DOM. Any anchor present in the DOM but absent from the raw HTML response is JavaScript-rendered and unavailable at crawl time. For technical SEO implementation purposes, this is classified as a rendering failure with direct internal link graph consequences — not a UX issue. See our guide on technical SEO rendering architecture for the complete framework.
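The raw-versus-rendered comparison can be scripted with Python’s standard-library HTML parser. The sketch assumes `raw_html` is the body returned by curl and `rendered_html` is the rendered DOM serialized from DevTools (for example by copying `document.documentElement.outerHTML` in the console). It compares absolute `href` values of `<a>` tags only, so navigation triggered by click handlers without an `href` will not appear in either set.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class AnchorCollector(HTMLParser):
    """Collects absolute href values from <a> tags."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.hrefs = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.add(urljoin(self.base_url, href))


def anchor_hrefs(html, base_url):
    parser = AnchorCollector(base_url)
    parser.feed(html)
    parser.close()
    return parser.hrefs


def js_only_links(raw_html, rendered_html, base_url):
    """Links present in the rendered DOM but missing from the raw HTML response."""
    return anchor_hrefs(rendered_html, base_url) - anchor_hrefs(raw_html, base_url)
```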

Fix: move all navigation elements, hub links, and contextual internal links to server-rendered HTML. Reserve client-side rendering for interactive UI elements that carry no SEO link graph function. The Core Web Vitals rendering architecture guidelines and SEO rendering requirements converge on the same solution: server-side delivery of all content and link structures that Googlebot must process at crawl time.

Internal Link Topology Audit — Detection and Measurement Model

A systematic internal link topology audit provides the quantitative baseline for all remediation prioritization. Without measurement, link architecture decisions are directional guesses. The audit produces four primary outputs: depth distribution map, authority flow model, anchor text distribution report, and orphan population inventory.

Audit Execution Protocol

Run a full site crawl using Screaming Frog or Sitebulb configured to follow internal links and extract all anchor text, source URL, destination URL, and link position data. Export the internal link graph as a flat file for analysis.

Depth distribution: calculate the shortest path length from the homepage to every crawled URL. Build a frequency histogram across depth buckets 1–7+. Any significant population of canonical pages at depth five or deeper is a remediation priority.
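Given a {url: depth} mapping from the shortest-path step, the histogram is a bucketed count. In this sketch the homepage (depth 0) is left out to match the 1–7+ buckets, and everything at depth 7 or deeper is pooled into the last bucket.

```python
from collections import Counter


def depth_histogram(depths, cap=7):
    """Count URLs per click-depth bucket 1..cap-1, pooling depth >= cap into 'cap+'.

    Depth 0 (the homepage) is excluded.
    """
    counts = Counter()
    for depth in depths.values():
        if depth >= cap:
            counts[f"{cap}+"] += 1
        elif depth >= 1:
            counts[str(depth)] += 1
    labels = [str(d) for d in range(1, cap)] + [f"{cap}+"]
    return {label: counts[label] for label in labels}
```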

Authority flow: for each URL, calculate inbound and outbound internal link counts. Identify pages with high outbound and low inbound ratios — potential authority sinks. Cross-reference against GSC organic performance to identify pages where authority flow investment would produce measurable ranking return.

Orphan population: compare crawled URL list against sitemap URL list. URLs in sitemap but not reachable through crawl from the homepage are orphan candidates requiring verification and link injection.

Run the complete internal link audit as part of every quarterly technical SEO audit cycle. Between audit cycles, use post-deployment crawl sampling to verify that template updates have not broken internal link paths across high-priority page types.

Deployment Governance for Internal Link Stability

Internal link architecture degrades through deployments. Navigation template changes silently remove hub links. CMS plugin updates modify breadcrumb output. Content management system migrations break existing contextual link paths. Without governance built into the deployment pipeline, link architecture achievements erode within weeks of implementation.

Pre-Deployment Internal Link Checks

Before any deployment affecting navigation templates, CMS link generation logic, or URL structures, run the following validation steps:

Internal link graph sample crawl: crawl a representative set of 100–200 URLs from each affected template type. Verify inbound and outbound link counts match pre-deployment baseline. Any template where link counts drop significantly relative to baseline indicates a deployment regression requiring resolution before release.
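The baseline comparison can be automated per template. This sketch assumes per-template lists of link counts for the same sampled URLs before and after the deployment (run it once for inbound and once for outbound counts); the 10% drop threshold is a hypothetical stand-in for “significantly,” to be set from your own baseline variance.

```python
from statistics import mean


def link_count_regressions(baseline, current, max_drop=0.10):
    """baseline/current: {template: [link counts for sampled URLs]}.

    Returns {template: (baseline mean, current mean)} for templates whose
    mean count fell by more than max_drop (as a fraction of the baseline).
    """
    flagged = {}
    for template, before in baseline.items():
        after = current.get(template, [])
        before_avg = mean(before) if before else 0.0
        after_avg = mean(after) if after else 0.0  # a missing template counts as zero links
        if before_avg and (before_avg - after_avg) / before_avg > max_drop:
            flagged[template] = (before_avg, after_avg)
    return flagged
```

A non-empty result is the regression signal that should block release.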

Depth validation for priority pages: rerun the shortest-path calculation for the top 100 commercial pages. Confirm no page has increased in depth relative to the pre-deployment baseline. Depth increases in priority pages are a deployment hold condition.
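The depth hold condition reduces to comparing two depth maps. In this sketch both maps come from the shortest-path calculation on the pre- and post-deployment crawls, and a priority URL missing from the post-deployment map is treated as a regression because it is no longer reachable from the homepage.

```python
def depth_hold(baseline_depths, current_depths, priority_urls):
    """Priority URLs whose click depth increased, or that became unreachable,
    after deployment. Returns {url: (depth before, depth after or None)}."""
    regressions = {}
    for url in priority_urls:
        before = baseline_depths.get(url)
        after = current_depths.get(url)  # None = not reached in the post-deploy crawl
        if before is None:
            continue  # no baseline to compare against
        if after is None or after > before:
            regressions[url] = (before, after)
    return regressions
```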

Anchor text verification: for the top 50 inbound anchor text instances on cluster hub and commercial target pages, confirm anchors are present and unchanged in the post-deployment crawl. Anchor text modifications introduced by template changes affect topical signal without any visible content change.

Integrate these checks into the deployment governance model defined in the technical SEO checklist. Link architecture stability is a blocking deployment condition, not a post-release audit finding. The most common link architecture regressions introduced at deployment are documented in the technical SEO implementation mistakes reference — reviewing those failure patterns before any navigation or template deployment significantly reduces regression risk.

Measuring Internal Link Architecture Effectiveness

Authority distribution improvements typically take four to eight weeks to produce measurable ranking changes. Intermediate signals are available earlier and validate that the architectural changes are propagating correctly.

| Metric | Data Source | Measurement Timing | Healthy Signal |
|---|---|---|---|
| Crawl frequency for depth-reduced pages | Server logs: Googlebot request timestamps per URL | Weeks 2–3 post-implementation | Increased request frequency for pages where depth was reduced |
| Orphan population size | Sitemap vs. crawl graph comparison | Monthly | Zero or decreasing orphan count across audit cycles |
| GSC “Discovered – currently not indexed” count | Google Search Console Page indexing report (formerly Coverage) | Weeks 4–6 post-implementation | Reduction in this state for pages that received link injection |
| Average click depth for commercial pages | Screaming Frog shortest-path calculation | Post-deployment crawl; monthly thereafter | Decreasing average depth for priority page populations |
| Anchor text distribution per hub URL | Internal link graph export (anchor field) | Quarterly audit cycle | Increasing ratio of partial/exact match anchors vs. generic anchors |
| Organic impressions for cluster hubs | GSC Performance report (query-level data) | Weeks 6–10 post-implementation | Increasing impression share for target keyphrases on hub pages |

The crawl frequency signal from server logs provides the earliest validation — visible within two to three weeks of link injection for depth-reduced pages. GSC Coverage and organic performance changes lag by four to eight weeks as Google re-evaluates page authority and topical relevance based on the updated link graph. Both measurement layers are required for a complete effectiveness picture. For log-based measurement methodology, see our technical SEO log file analysis guide.

Frequently Asked Questions

How many internal links should a single page have?

There is no universal limit, but signal dilution is a real constraint. Each internal link on a page distributes some fraction of that page’s outbound equity. A page with 200 internal links distributes significantly less equity per link than a page with 20 internal links carrying the same total authority. In practice, keep contextual internal links in editorial content to 8–15 per page for substantive link equity transfer. Navigation links (header, footer, sidebar) are structural and counted separately; their equity contribution per link is generally assumed to be lower because of their site-wide presence and non-contextual position.
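The dilution arithmetic is easiest to see in the simplified model from the original 1998 PageRank paper: a page p with score PR(p) and L(p) outbound links passes d·PR(p)/L(p) through each link, where d is the damping factor (the paper uses 0.85). The worked figures below assume a relative score of PR(p) = 1. Google’s current systems are not this simple, so read this as an illustration of dilution, not a measurement.

$$
\begin{aligned}
\text{share per link} &= \frac{d \cdot PR(p)}{L(p)} \\
L(p) = 20:\quad \frac{0.85 \times 1}{20} &= 0.0425 \\
L(p) = 200:\quad \frac{0.85 \times 1}{200} &= 0.00425
\end{aligned}
$$

Ten times as many links means each link carries one tenth of the equity, all else equal.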

Does link position on the page affect authority transfer?

Position likely affects both crawl priority and equity signal, although Google has not documented exact weightings. Links in the main content body, particularly within the first two to three paragraphs, are widely understood to carry more weight than links in footers, sidebars, or navigation menus. Google’s “reasonable surfer” patent describes weighting links by how likely a user is to click them, which is consistent with the assumption that more prominent placement indicates stronger editorial endorsement. For high-value internal link targets, place the primary contextual link within the main content body, ideally in an early paragraph where the topical connection is strongest.

How do you handle internal linking for large e-commerce catalogs with millions of product pages?

At catalog scale, manual internal linking is not operationally viable. The architecture must be template-driven: category pages automatically link to featured products and subcategories; product pages automatically link to the parent category, related products (by tag or attribute), and complementary products. The link selection logic is built into the CMS template, not managed editorially. Authority flow modeling at catalog scale uses sampled crawl data rather than full-graph analysis — sample 5–10% of the URL set across all template types and model authority distribution from that sample. The technical SEO crawl budget optimization framework applies directly here: template-level link density decisions affect both authority distribution and crawl depth simultaneously.
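Sampling the same share of every template keeps low-volume templates from vanishing from the model. This sketch assumes a hypothetical {template: [urls]} mapping from the URL inventory; it takes at least one URL per non-empty template and uses a fixed seed so repeated audits draw the same sample.

```python
import math
import random


def stratified_sample(urls_by_template, fraction=0.05, seed=0):
    """Sample the same fraction of URLs from every template type (at least one each)."""
    rng = random.Random(seed)  # fixed seed: repeat audits draw the same URLs
    sample = {}
    for template, urls in urls_by_template.items():
        urls = list(urls)
        k = min(len(urls), max(1, math.ceil(len(urls) * fraction)))
        sample[template] = rng.sample(urls, k)
    return sample
```

Crawl only the sampled URLs, then run the authority flow model on that subgraph; results describe template-level patterns rather than individual product pages.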

When should cross-cluster internal links be added versus avoided?

Add cross-cluster links when the topical adjacency is genuine and the destination cluster hub is relevant to a segment of the source page’s audience. Avoid cross-cluster links when the connection is superficial or when the anchor text required to make the link contextually relevant would be forced or off-topic. The test is whether a reader encountering the link in context would find it useful and topically connected. Structurally, cross-cluster links should connect hub-to-hub — pillar pages linking to adjacent pillar pages — rather than connecting supporting articles across clusters, which fragments topical authority signals.

How do you detect and fix internal link authority silos?

An authority silo exists when a cluster of pages accumulates strong inbound link equity but distributes none of it to adjacent valuable pages. Detection: export the internal link graph and calculate the inbound-to-outbound link ratio for each URL. Pages with a high inbound-to-outbound ratio, particularly hub pages that do not link to commercial conversion pages, are silo candidates. Fix: add contextual outbound links from the silo hub to high-value commercial pages in the same topical domain. The anchor should be partial match, contextually integrated into the hub’s primary content rather than appended as a related links block. Measure effectiveness via crawl frequency improvement on the newly linked commercial pages over the subsequent 30-day log window. For the complete log measurement framework, see our technical SEO log file analysis guide.