Crawl budget failure is silent. Rankings degrade slowly, index coverage shrinks, and GSC data lags the root cause by weeks. By the time the signal is visible, the architecture has already been bleeding efficiency for months. The fix starts with logs — not assumptions.

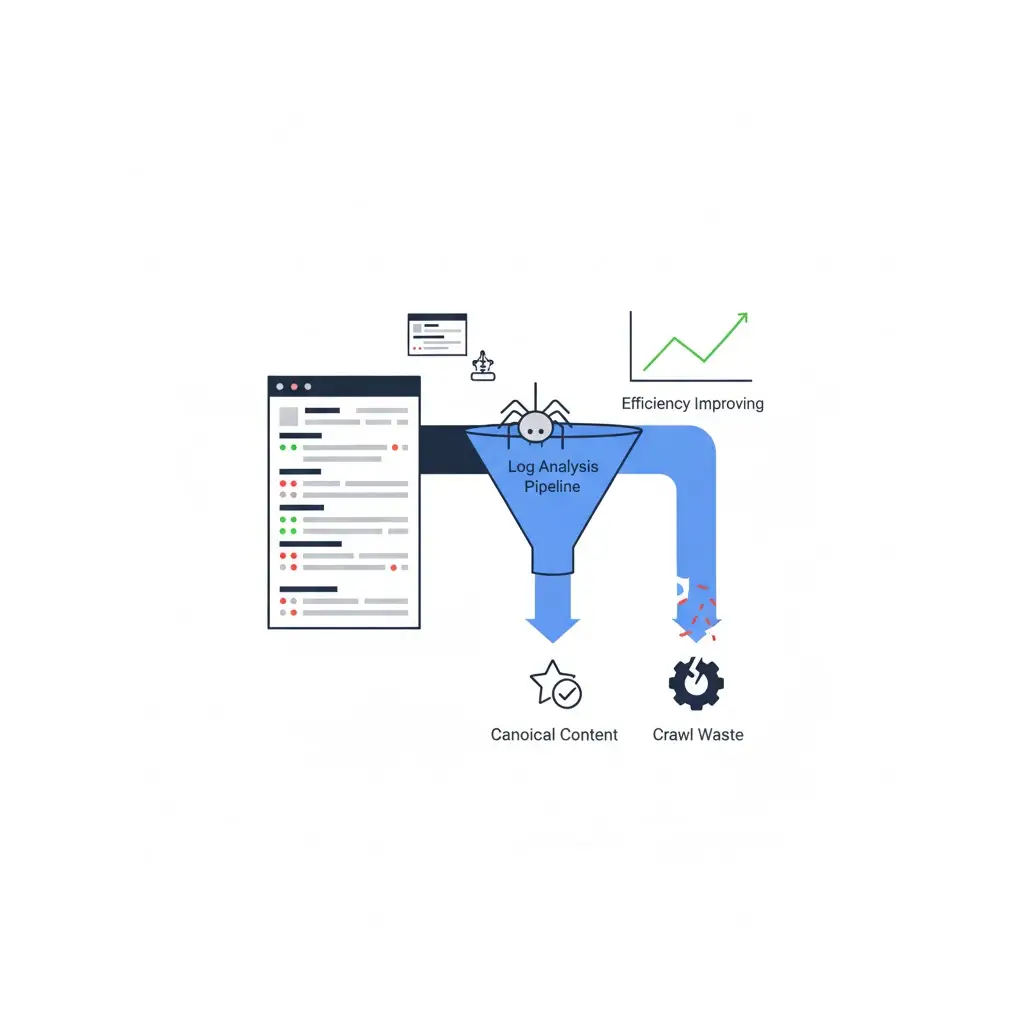

This guide builds a complete log-driven crawl efficiency system: from raw log extraction through waste classification, reallocation strategy, and 30–60-day measurement. Every section follows the same diagnostic logic that governs the broader technical SEO architecture framework: crawl budget is not a standalone setting; it is an output of architectural decisions.

What Crawl Budget Actually Means in 2026

Crawl budget is not a single number Google assigns to a domain. It is determined by two separate factors: crawl rate limit (which Google’s documentation now calls the crawl capacity limit) and crawl demand. Crawl rate limit is the maximum rate at which Googlebot can crawl without overloading the server. Crawl demand is how frequently Google wants to crawl or recrawl URLs, based on their perceived value, popularity, and link signals.

In practice, most sites do not hit their rate limit. The operational failure is on the demand side — Googlebot allocates a large proportion of available requests to URLs that return zero indexing value. Those requests displace canonical content, reduce refresh frequency on revenue-critical pages, and accumulate as structural debt.

Google’s Search Central documentation frames crawl budget management as a concern mainly for large sites (1 million+ unique pages) whose content changes about weekly, and for medium-sized sites (10,000+ unique pages) whose content changes daily. However, crawl efficiency matters at any scale where parameter proliferation, dynamic URL generation, or faceted navigation exists, and those conditions appear on sites with as few as 5,000 crawlable URLs.

In 2026, the additional constraint is rendering latency. JavaScript-heavy architectures introduce a second budget layer: the rendering queue. A URL can be crawled quickly and still wait in Google’s rendering pipeline, sometimes far longer than the crawl itself took, before its JavaScript-dependent content is processed. Crawl budget optimization must therefore treat the crawl event and the render event as separate resource bottlenecks.

Log File Analysis as the Primary Source of Truth

GSC coverage reports, crawl stats dashboards, and third-party crawlers all provide approximations. Server logs are ground truth. They record every request Googlebot made, the response code returned, the URL path, the timestamp, and the user agent string — with no sampling, no lag, and no interpretation layer.

Every crawl budget decision that is not grounded in log data is a hypothesis. Logs convert hypotheses into measurements.

Extracting and Cleaning Server Logs

Log format varies by server stack. Apache is typically configured to write the Combined Log Format, and Nginx’s predefined combined format uses the same fields. CDN-intercepted traffic (CloudFront, Cloudflare, Fastly) requires log export from the CDN layer, not the origin, to capture requests that never reach the web server.

Extraction steps for most environments:

Pull raw access logs for a minimum 30-day window. Shorter windows miss crawl cycle patterns and produce unreliable frequency distributions. For enterprise sites, 60–90 days is the recommended baseline.

Filter immediately for Googlebot user agent strings: Googlebot/2.1 and Googlebot-Image/1.0. Preserve AdsBot-Google separately — it follows different crawl rules and should not be mixed into organic crawl budget analysis.

Strip static asset requests — CSS, JS, images, fonts — unless the investigation specifically targets rendering resource crawl behavior. These inflate request counts without contributing to indexation analysis.

Output a cleaned dataset with at minimum: timestamp, URL path, HTTP status code, response size, and crawl agent.
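A minimal Python sketch of the cleaning steps above, assuming logs in the default Apache/Nginx combined format (CDN exports use their own field layouts and would need a different pattern). The static-asset extension list is an assumption, and the user agent match is only a first pass: requests that merely claim to be Googlebot still need DNS verification.

```python
import re
from dataclasses import dataclass
from datetime import datetime
from typing import Iterable, Iterator, Optional

# Default "combined" layout shared by Apache and Nginx:
# ip ident user [time] "METHOD path PROTOCOL" status bytes "referer" "user-agent"
COMBINED = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) (?P<size>\d+|-) '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)
# Assumed static-asset extensions; adjust to your stack.
STATIC = re.compile(r"\.(?:css|js|png|jpe?g|gif|svg|webp|ico|woff2?|ttf)(?:\?|$)", re.IGNORECASE)


@dataclass
class Hit:
    timestamp: datetime
    ip: str
    path: str
    status: int
    size: int
    agent: str


def parse_line(line: str) -> Optional[Hit]:
    """Parse one combined-format line; return None for lines that do not match."""
    m = COMBINED.match(line)
    if m is None:
        return None
    return Hit(
        timestamp=datetime.strptime(m["time"], "%d/%b/%Y:%H:%M:%S %z"),
        ip=m["ip"],
        path=m["path"],
        status=int(m["status"]),
        size=0 if m["size"] == "-" else int(m["size"]),
        agent=m["agent"],
    )


def clean(lines: Iterable[str]) -> Iterator[Hit]:
    """Yield Googlebot page requests only.

    AdsBot-Google does not contain the "Googlebot" token, so it is excluded here
    and can be extracted separately with its own filter.
    """
    for line in lines:
        hit = parse_line(line)
        if hit is None or "Googlebot" not in hit.agent:
            continue
        if STATIC.search(hit.path):
            continue  # CSS, JS, images, fonts: inflate counts, say little about indexation
        yield hit
```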

Googlebot Verification and Segmentation

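User agent strings are spoofable. Before drawing conclusions from log data, verify that requests claiming to be Googlebot are legitimate. Run a reverse DNS lookup on the requesting IP and confirm the hostname ends in googlebot.com, then run a forward DNS lookup on that hostname and confirm it resolves back to the same IP. The reverse lookup alone is not enough, because whoever controls an IP range can set its reverse DNS records to any name. Google also publishes its crawler IP ranges as JSON files that can be matched against instead. Any Googlebot claim that fails verification is bot traffic misidentifying itself; exclude it from analysis.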
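The verification sequence can be sketched with Python’s standard socket module. The lookup functions are injectable so the logic can be tested without network access. The google.com suffix is an assumption meant to cover some of Google’s other crawlers; tighten the tuple to googlebot.com alone if only Googlebot matters. In practice, cache results per IP, because the same crawler addresses repeat across millions of log lines.

```python
import socket
from typing import Callable, Iterable

# Googlebot resolves to googlebot.com; google.com is an assumption for other Google crawlers.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com")


def reverse_lookup(ip: str) -> str:
    """PTR lookup: the hostname registered for this IP."""
    return socket.gethostbyaddr(ip)[0]


def forward_lookup(host: str) -> Iterable[str]:
    """A/AAAA lookup: every address the hostname resolves to."""
    return {info[4][0] for info in socket.getaddrinfo(host, None)}


def is_verified_googlebot(
    ip: str,
    reverse: Callable[[str], str] = reverse_lookup,
    forward: Callable[[str], Iterable[str]] = forward_lookup,
) -> bool:
    """Reverse DNS, hostname suffix check, then forward-confirm back to the same IP."""
    try:
        host = reverse(ip).rstrip(".").lower()
    except OSError:  # socket.herror / socket.gaierror: no PTR record, cannot verify
        return False
    if not host.endswith(GOOGLE_SUFFIXES):
        return False
    try:
        # A spoofed PTR record will not resolve back to the spoofer's IP,
        # because the forward zone for googlebot.com is controlled by Google.
        return ip in set(forward(host))
    except OSError:
        return False
```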

After verification, segment the confirmed Googlebot dataset into crawl categories:

Canonical content pages: the URLs you intend to rank. Product pages, blog posts, category pages, landing pages with indexable intent.

Non-canonical parameter variants: URLs generated by sorting, filtering, tracking parameters, or session IDs that duplicate canonical content.

Faceted navigation combinations: filter combinations with low or zero independent search demand.

Pagination: page 2 and deeper of category or archive sequences.

Soft-404s and error pages: URLs returning 200 but serving thin, absent, or error-state content.

Infrastructure URLs: sitemaps, robots.txt, and feed URLs, which are expected to be crawled but should be monitored, plus admin paths that should not be consuming crawl allocation at all.
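One way to start the segmentation is a rule-based classifier over URL paths. Every pattern and parameter name below is hypothetical and should be replaced with your own templates. Soft-404s cannot be identified from the URL alone, so they are passed in from a separate content check, and "canonical" here only means "matched no waste rule", not a verified canonical tag.

```python
import re
from urllib.parse import parse_qsl, urlsplit

# Hypothetical path rules for one site; the first matching category wins.
PATH_RULES = [
    ("infrastructure", re.compile(r"^/(robots\.txt|sitemap[^/]*\.xml|feed/|admin/)")),
    ("pagination", re.compile(r"/page/\d+/?$")),
]
# Hypothetical parameter names per category.
PAGINATION_PARAMS = {"p", "start", "page"}
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref", "sid", "gclid"}
FACET_PARAMS = {"color", "size", "brand", "material", "price"}


def classify(path: str, soft_404_paths: frozenset = frozenset()) -> str:
    if path in soft_404_paths:
        return "soft_404"  # supplied by a content audit, not inferable from the URL
    parts = urlsplit(path)
    for name, pattern in PATH_RULES:
        if pattern.search(parts.path):
            return name
    params = {k for k, _ in parse_qsl(parts.query, keep_blank_values=True)}
    if params & PAGINATION_PARAMS:
        return "pagination"
    if params & TRACKING_PARAMS:
        return "parameter_variant"
    if params & FACET_PARAMS:
        return "facet"
    if params:
        return "parameter_variant"  # unknown parameters count as waste until classified
    return "canonical"
```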

This segmentation is the foundation of the entire optimization strategy. Without it, reallocation decisions are directionally blind.

Crawl Frequency Distribution Modeling

Crawl frequency is not uniform. Googlebot recrawls high-value, frequently updated, well-linked pages at significantly higher rates than deep, orphaned, or low-authority content. The frequency distribution across a site’s URL population reveals exactly where crawl investment is misallocated.

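Build the frequency model by grouping URLs into crawl frequency buckets: crawled daily, crawled weekly, crawled monthly, crawled once in the analysis window, and never crawled in the analysis window. The last bucket cannot come from logs alone: it is the set of known URLs (from sitemaps or a site crawl) that never appear in the log data. Map each bucket against URL type and crawl depth.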
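A sketch of the bucketing step, assuming a 30-day window and defining each bucket by the average interval between hits. The thresholds are assumptions, not a standard: "monthly" here means two or more hits but less often than weekly. The inventory argument supplies the known URLs needed for the never-crawled bucket.

```python
from collections import Counter
from typing import Iterable


def frequency_buckets(crawled_paths: Iterable[str], inventory: Iterable[str],
                      window_days: int = 30) -> dict[str, str]:
    """Bucket URLs by the average interval between Googlebot hits in the window.

    Assumed thresholds: daily <= 1 day, weekly <= 7 days, monthly = 2+ hits but
    less often than weekly, once = a single hit, never = known URL with no hits.
    """
    hits = Counter(crawled_paths)
    buckets = {}
    for url in set(inventory) | set(hits):
        n = hits.get(url, 0)
        if n == 0:
            buckets[url] = "never"
            continue
        interval = window_days / n  # average days between hits
        if interval <= 1:
            buckets[url] = "daily"
        elif interval <= 7:
            buckets[url] = "weekly"
        elif n >= 2:
            buckets[url] = "monthly"
        else:
            buckets[url] = "once"
    return buckets
```

Joining each URL’s bucket with its URL type (for example, from a pattern-based classifier) gives the cross-tabulation the diagnostic questions call for.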

The diagnostic output should answer: what percentage of your high-priority canonical content is in the daily or weekly bucket? What percentage of your non-canonical parameter variants is in the same bucket? In a well-optimized site, canonical content should dominate high-frequency crawling. In most unoptimized sites, the inverse is true — parameter and facet variants capture disproportionate crawl frequency while canonical pages refresh slowly.

Crawl Waste Identification Framework

Crawl waste is any Googlebot request that consumes budget without contributing to indexable content or meaningful signal collection. Identifying waste precisely — by type, volume, and URL pattern — is the prerequisite for any reallocation strategy.

Parameter Crawl Waste

Parameters are the most pervasive source of crawl waste across all site types. Every unmanaged parameter that generates unique URLs expands the crawl surface, and that surface keeps growing as marketing campaigns, integrations, and CMS updates introduce new parameters.

The failure pattern: a site launches with 20,000 canonical product pages. Analytics parameters, A/B test parameters, and affiliate tracking IDs are appended to URLs across marketing campaigns. Within six months, the crawlable URL surface is 200,000+ — the additional 180,000 URLs are exact or near-exact duplicates of canonical content, indexed or not.

Detection requires log segmentation by URL pattern. Use regex grouping to isolate requests containing known parameter names: utm_, ref=, sort=, filter=, sid=, page=. Match each name whether it appears first in the query string (?utm_) or later (&utm_); patterns anchored on ? alone miss every parameter that is not in first position. Calculate the total request share for each parameter family. Any parameter family consuming more than 3–5% of total Googlebot requests without producing indexable content is a remediation priority.
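The family shares can also be computed by parsing each query string instead of regex-matching raw URLs, which handles parameters in any position. The family definitions below are hypothetical placeholders, and each request counts once per family even if several of that family’s parameters appear.

```python
from collections import Counter
from typing import Iterable, Optional
from urllib.parse import parse_qsl, urlsplit

# Hypothetical parameter families; a name ending in "_" is treated as a prefix.
FAMILIES = {
    "tracking": ("utm_", "ref", "gclid"),
    "sort": ("sort", "order"),
    "filter": ("filter",),
    "session": ("sid", "sessionid"),
    "pagination": ("page",),
}


def family_of(param: str) -> Optional[str]:
    for family, names in FAMILIES.items():
        for name in names:
            if param == name or (name.endswith("_") and param.startswith(name)):
                return family
    return None


def parameter_shares(paths: Iterable[str]) -> dict[str, float]:
    """Share of all Googlebot requests touching each parameter family.

    Parsing the query string catches a parameter whether it follows '?' or '&'.
    """
    total = 0
    counts: Counter = Counter()
    for path in paths:
        total += 1
        names = {k for k, _ in parse_qsl(urlsplit(path).query, keep_blank_values=True)}
        counts.update({family_of(n) for n in names} - {None})  # once per family per request
    return {f: n / total for f, n in counts.items()} if total else {}
```

Families whose share exceeds the 3–5% threshold are the remediation list; unknown parameters fall through as None and are worth reviewing separately.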

The fix is not universal blocking. It is parameter classification: tracking parameters canonicalize to clean URLs site-wide; sorting parameters canonicalize to base category URLs; session IDs are blocked in robots.txt; high-value commercial filter parameters are evaluated individually for indexation merit. Classification must happen at the template layer — not URL by URL.

Faceted Navigation Crawl Waste

Faceted navigation generates crawl waste at a different order of magnitude than parameter waste. A catalog with 10,000 products and 15 filterable dimensions, such as brand, color, size, material, price range, rating, and availability, can generate tens of millions of unique URL combinations. Most carry no independent search demand.
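The scale follows from simple combinatorics. Assuming single-select filters whose parameters are always emitted in a fixed order, each dimension with v values contributes v + 1 states (unset, or one value), so one category page yields the product of (v + 1) across dimensions, minus 1 for the unfiltered base URL. Multi-select filters grow as 2 raised to the total number of values. Unnormalized parameter order or added sort options would push the real count higher.

```python
from math import prod


def facet_url_count(values_per_dimension: list[int], multi_select: bool = False) -> int:
    """Distinct filtered URLs for one category page.

    Single-select: each dimension is unset or one of its v values -> prod(v + 1) - 1.
    Multi-select: any subset of each dimension's values -> 2 ** sum(v) - 1.
    The -1 removes the unfiltered base category URL.
    """
    if multi_select:
        return 2 ** sum(values_per_dimension) - 1
    return prod(v + 1 for v in values_per_dimension) - 1
```

With seven dimensions of five values each, facet_url_count([5] * 7) returns 279,935 for a single category, so a hundred such categories already reach the tens of millions.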

The diagnostic starts with log data: segment all Googlebot requests matching facet URL patterns and calculate their share of total crawl allocation. On uncontrolled e-commerce sites, facet waste commonly consumes 50–80% of total crawl budget. That means canonical product pages — the URLs that rank and convert — receive a fraction of available crawl cycles.

Remediation requires a facet classification system, not a blanket block. High-demand facets (brand filters on high-traffic categories, specification filters matching commercial query patterns) should be indexed with self-referencing canonicals and sitemap inclusion. Low-demand combinations should canonicalize to the parent category. Pure UX sort filters (price ascending, most reviewed) should be canonicalized and, where volume is extreme, disallowed in robots.txt, accepting that once a URL is disallowed, Googlebot can no longer read its canonical tag.

The complete facet governance model is part of the technical SEO architecture framework, which defines classification criteria and implementation methods at the template level.

Pagination and Soft-404 Waste

Pagination waste is site-specific. On large archives where paginated pages carry genuinely unique content — distinct product sets, distinct article lists — pagination serves a legitimate crawl purpose. The waste emerges when paginated pages are thin duplicates of the root category, or when pagination extends to depths (page 50, page 200) where content density drops to near-zero.

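Log analysis reveals pagination crawl share by depth. Extract all URLs matching pagination patterns (/page/N/, ?p=N, ?start=N) and group by page number. Chart crawl frequency against page depth. The typical curve shows rapid decline: pages 2 and 3 receive substantial crawl attention, while pages beyond 10 each receive few requests, although across a large archive those deep pages can add up. Budget allocated beyond that inflection point is recoverable by capping pagination depth, consolidating thin tail pages, or applying noindex to thin paginated tails. Avoid canonicalizing paginated pages to the root: Google’s guidance is to give each page in a paginated sequence its own self-referencing canonical rather than pointing the sequence at page 1.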
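The depth grouping can be sketched as follows, using the three pagination patterns named above. The page size used to convert start= offsets into page numbers is an assumption, and the depth threshold passed to share_beyond should come from the inflection point in your own chart.

```python
import re
from collections import Counter
from typing import Iterable, Optional

# /page/N/, ?p=N and ?start=N; start= is an item offset, so it needs a page size.
PAGE_PATH = re.compile(r"/page/(\d+)/?(?:$|\?)")
PAGE_PARAM = re.compile(r"[?&]p=(\d+)(?:&|$)")
START_PARAM = re.compile(r"[?&]start=(\d+)(?:&|$)")


def page_number(path: str, page_size: int = 20) -> Optional[int]:
    if m := PAGE_PATH.search(path):
        return int(m[1])
    if m := PAGE_PARAM.search(path):
        return int(m[1])
    if m := START_PARAM.search(path):
        return int(m[1]) // page_size + 1  # offset 0 is page 1
    return None  # not a paginated URL


def depth_histogram(paths: Iterable[str], page_size: int = 20) -> dict[int, int]:
    """Googlebot hits per page number, sorted by depth."""
    pages = (page_number(p, page_size) for p in paths)
    return dict(sorted(Counter(n for n in pages if n is not None).items()))


def share_beyond(histogram: dict[int, int], threshold: int) -> float:
    """Fraction of paginated hits landing deeper than `threshold`."""
    total = sum(histogram.values())
    return sum(c for page, c in histogram.items() if page > threshold) / total if total else 0.0
```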

Soft-404s are a separate but related problem. A soft-404 is a URL that returns HTTP 200 but serves empty, minimal, or error-state content — a discontinued product page showing “no results,” an expired event page serving a generic template, a filtered category with zero matching products. These URLs consume crawl budget, may be indexed as thin content, and communicate poor site quality signals. Detection: export all 200-response URLs from log data and cross-reference against crawl content audits to identify pages with near-zero content. Run the technical SEO audit to systematically flag soft-404 patterns across template types.
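Where a full content audit is not yet available, response size in the cleaned log dataset can serve as a rough first filter. This sketch flags 200-status URLs whose median response size is under a quarter of their template’s median. The template mapping and the 0.25 ratio are assumptions, and logged sizes are often compressed transfer sizes, so treat the output as candidates to confirm with a content fetch, not proof of a soft-404.

```python
from collections import defaultdict
from statistics import median
from typing import Iterable


def soft_404_candidates(hits: Iterable[tuple[str, str, int, int]], ratio: float = 0.25) -> list[str]:
    """hits: (template, path, status, size_bytes) tuples from the cleaned log dataset."""
    sizes_by_url = defaultdict(list)
    template_of = {}
    for template, path, status, size in hits:
        if status == 200:  # soft-404s, by definition, return 200
            sizes_by_url[path].append(size)
            template_of[path] = template
    url_size = {url: median(sizes) for url, sizes in sizes_by_url.items()}

    # Typical size per template, computed from each URL's median size.
    by_template = defaultdict(list)
    for url, size in url_size.items():
        by_template[template_of[url]].append(size)
    template_median = {t: median(sizes) for t, sizes in by_template.items()}

    return sorted(
        url for url, size in url_size.items()
        if size < ratio * template_median[template_of[url]]
    )
```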

Orphan Crawl Traps

An orphan crawl trap is a URL that Googlebot discovers and recrawls repeatedly despite having no internal link pointing to it, no sitemap inclusion, and no indexation value. These typically originate from legacy URL patterns, deleted content with no 410 response, or dynamically generated URLs from JavaScript interactions that were never canonicalized.

Orphan traps persist in Googlebot’s crawl queue because they were crawled previously and remain in Google’s internal URL database. Without a 410 Gone response or a disallow directive, Google can keep allocating crawl requests to these dead endpoints for a long time.

Detection: export the full crawled URL list from logs. Cross-reference against your current sitemap, internal link graph, and redirected URL inventory. URLs present in crawl logs that appear in none of these sources are orphan candidates. Confirm by fetching each URL programmatically and verifying response content. Remediation: return 410 for permanently removed content, 301 for relocated content, and robots.txt disallow for URL patterns that should never have been crawled.
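The cross-reference step is a set difference once every source is normalized to the same form. This sketch compares on path plus query string, since logs record paths while sitemaps and crawler exports use absolute URLs. It assumes a single host and ignores case and trailing-slash variants, which real data may require handling.

```python
from typing import Iterable
from urllib.parse import urlsplit


def to_path(url: str) -> str:
    """Normalize an absolute URL or a logged path to path + query."""
    parts = urlsplit(url)
    path = parts.path or "/"
    return path + ("?" + parts.query if parts.query else "")


def orphan_candidates(crawled: Iterable[str], sitemap: Iterable[str],
                      linked: Iterable[str], redirected: Iterable[str]) -> list[str]:
    """URLs Googlebot requested that appear in none of the known URL sources."""
    known = {to_path(u) for source in (sitemap, linked, redirected) for u in source}
    return sorted({to_path(u) for u in crawled} - known)
```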

Crawl Share Segmentation Model

The crawl share model translates log analysis output into a prioritized action matrix. It provides a single-view summary of how Googlebot is currently spending crawl budget across URL types, and what the target allocation should be post-optimization.

Run this model monthly during active optimization and quarterly once baseline efficiency is achieved. The values below are illustrative of a typical unoptimized e-commerce site — actual distributions will vary by site type and architecture.

| URL Type | % of Googlebot Hits | Indexable? | Action Required |
|---|---|---|---|
| Canonical product / category pages | 18% | Yes | Increase crawl share; improve internal link depth |
| Faceted navigation combinations | 41% | No (most) | Classify by demand; canonicalize or block non-indexable facets |
| Tracking / analytics parameters | 14% | No | Canonical to clean URL site-wide; block high-volume patterns |
| Pagination (deep, thin tail) | 9% | Partial | Noindex or canonical thin pages beyond depth threshold |
| Soft-404 / empty result pages | 7% | No | Return 404/410 or redirect to parent; remove from crawl surface |
| Orphan legacy URLs | 5% | No | Return 410 or disallow in robots.txt |
| Infrastructure (sitemaps, feeds, robots) | 4% | N/A | Expected; monitor for excessive recrawl frequency |
| Canonical blog / content pages | 2% | Yes | Significantly underserved; requires depth flattening and link reinforcement |

A healthy crawl share model targets 60–70% of Googlebot requests landing on indexable canonical content. Sites below 30% on this metric have significant architectural waste to address before any content or link investment will perform at full efficiency.
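Those thresholds translate into a simple check. The category names and the "needs work" label for the band between 30% and 60% are hypothetical; the text only defines the target band and the under-30% warning.

```python
# Hypothetical category names for the indexable canonical rows of the table.
CANONICAL_TYPES = {"canonical_product_category", "canonical_content"}


def canonical_share_status(hits_by_type: dict[str, int]) -> tuple[float, str]:
    """Share of Googlebot hits on canonical content, banded by the thresholds in the text."""
    total = sum(hits_by_type.values())
    if total == 0:
        raise ValueError("no hits to evaluate")
    share = sum(n for t, n in hits_by_type.items() if t in CANONICAL_TYPES) / total
    if share >= 0.60:
        status = "healthy"        # at or above the 60-70% target band
    elif share >= 0.30:
        status = "needs work"     # hypothetical label for the middle band
    else:
        status = "significant architectural waste"
    return share, status
```

Feeding in the illustrative table values, canonical pages account for 18% + 2% = 20% of hits, which lands in the lowest band.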

Crawl Budget Reallocation Strategy

Reallocation is not a single action — it is a sequenced set of interventions applied in order of impact and reversibility. Start with the highest-waste, lowest-risk fixes and build toward deeper structural changes.

Robots.txt vs Canonical vs Noindex

Three directive mechanisms exist for removing URLs from effective crawl allocation. Each has a distinct crawl budget impact and a distinct indexation implication. Choosing the wrong mechanism is one of the most common technical SEO implementation mistakes in large-scale crawl optimization projects.

Robots.txt disallow prevents crawling entirely. Googlebot does not fetch the URL, does not read its canonical tag, and does not process any directives on the page. Crawl budget impact is immediate and complete. However, the URL may still be indexed if external links point to it — Google can index URLs it has never crawled based on external signal alone. Use robots.txt disallow for URL patterns that carry zero indexation value and have no external link exposure: session IDs, internal search result pages, admin paths, high-volume tracking parameter patterns.
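Because a disallow pattern that is slightly too broad or too narrow is easy to miss, it helps to test candidate rules against real logged URLs before deploying them. Python’s urllib.robotparser uses simple prefix matching and does not implement Google’s * and $ wildcards, so this is a minimal hand-written matcher following the documented behavior: * matches any sequence, a trailing $ anchors the end, the longest matching rule wins, and Allow wins ties. It handles a single user-agent group and skips percent-encoding normalization.

```python
import re


def _rule_regex(pattern: str) -> "re.Pattern[str]":
    """Translate a robots.txt path pattern into an anchored-at-start regex."""
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    regex = "".join(".*" if ch == "*" else re.escape(ch) for ch in body)
    return re.compile(regex + ("$" if anchored else ""))


def is_allowed(url_path: str, rules: list[tuple[str, str]]) -> bool:
    """rules: (directive, pattern) pairs from one user-agent group,
    e.g. ("disallow", "/*?sid="). Returns True if Googlebot may fetch url_path."""
    best_len, allowed = -1, True  # no matching rule means the URL is allowed
    for directive, pattern in rules:
        if not pattern:
            continue  # an empty Disallow blocks nothing
        if _rule_regex(pattern).match(url_path):
            length = len(pattern)
            # Longest (most specific) rule wins; on a tie, the allow rule wins.
            if length > best_len or (length == best_len and directive == "allow"):
                best_len, allowed = length, directive == "allow"
    return allowed
```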

Canonical tags allow crawling but consolidate indexation signals to the designated canonical. Crawl budget savings are partial: Googlebot still visits the page to read the canonical tag. Google treats the tag as a strong hint rather than a directive, so it may select a different canonical when other signals conflict. The advantage is signal consolidation: equity from any external links pointing to the canonicalized URL flows to the canonical destination. Use canonical consolidation for parameter variants and facet URLs that may receive external links and should pass equity to the parent page.

Noindex allows full crawling and rendering but removes the URL from the index. Crawl budget impact is minimal — Googlebot continues visiting the page regularly to confirm its noindex status. Noindex is appropriate for URLs that must remain crawlable for other reasons (internal tool pages, staging leak prevention), but should not be the primary mechanism for crawl waste reduction at scale.

For maximum crawl budget recovery, the sequence is: robots.txt disallow for high-volume zero-value patterns first, canonical consolidation for linkable parameter variants second, noindex for edge cases last.

Internal Link Reinforcement

Crawl budget reallocation is not only about blocking waste; it is also about actively directing Googlebot toward underserved canonical content. Internal links are among the strongest signals Googlebot uses to discover URLs and set crawl priority within a domain.

After reducing waste allocation, model the expected crawl share redistribution. If facet waste is reduced from 41% to 8%, the freed requests do not automatically flow to canonical content. Without active internal link reinforcement, Google may partially reduce overall crawl activity instead.

Target internal link interventions: ensure every canonical product and category page receives at least one contextual internal link from a high-authority hub page. Add contextual links from high-traffic content pages to underserved commercial pages. Increase link frequency to pages that appear in the low-crawl-frequency bucket in log data. Each additional qualified internal link increases the probability of more frequent Googlebot visits to the linked page.

Anchor text specificity matters for signal quality. Generic anchors (“learn more,” “see here”) do not communicate topical relevance. Every internal link should use an anchor that names the destination’s primary concept or keyword cluster.

Sitemap Prioritization

XML sitemaps influence crawl demand — not crawl rate. A URL in the sitemap signals to Google that it is considered important enough to warrant inclusion; a URL absent from the sitemap receives no such signal. Sitemap hygiene directly affects which URLs receive crawl attention and at what frequency.

The operational requirement: sitemaps must include only indexable, canonical URLs returning 200 status codes. Non-canonical URLs, redirected URLs, noindexed URLs, and soft-404s in the sitemap create conflicting signals — Google expects indexable content at sitemap-listed URLs and may deprioritize the sitemap as a source when it repeatedly encounters non-indexable responses.
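The inclusion rule can be enforced as a filter at sitemap generation time. The PageState fields are hypothetical; they would be populated from a crawl of the candidate URLs, with soft-404 status coming from a separate content check.

```python
from dataclasses import dataclass


@dataclass
class PageState:
    url: str
    status: int             # HTTP status returned when fetched
    canonical: str          # canonical URL declared by the page
    noindex: bool           # meta robots or X-Robots-Tag noindex present
    soft_404: bool = False  # from a separate thin/empty-content check


def sitemap_eligible(pages: list[PageState]) -> list[str]:
    """Keep only 200-status, indexable, self-canonical URLs that are not soft-404s."""
    return [
        p.url for p in pages
        if p.status == 200 and not p.noindex and not p.soft_404 and p.canonical == p.url
    ]
```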

At enterprise scale, segmented sitemaps improve crawl targeting: separate sitemap files for product pages, category pages, blog content, and newly published URLs. Freshness-prioritized sitemaps, containing only URLs updated in the last 30 days, can accelerate crawl refresh of recently modified content. Submit these in Google Search Console and use the Page indexing report, filtered by sitemap, to monitor sitemap URLs listed as “Discovered - currently not indexed” that are not being crawled despite inclusion.

Depth Flattening Without URL Changes

Click depth is one of the most significant structural determinants of crawl frequency. Pages at depth 2 typically receive far more frequent crawl attention than pages at depth 5. Reducing click depth for high-priority content does not require URL restructuring; it requires internal link topology changes.

The mechanism: identify canonical pages currently sitting at click depth 4 or deeper. Add contextual links to those pages from hub pages at depth 1 or 2. A single link from a depth-2 hub page reduces the target page’s effective depth to 3. Multiple links from depth-1 and depth-2 pages create multiple short crawl paths, increasing effective crawl priority.

Depth flattening at scale requires a link topology map. Build it by exporting internal link data from Screaming Frog or Sitebulb, calculating the shortest path from the homepage to every canonical URL, and identifying pages where the shortest path exceeds 3 clicks. Prioritize depth reduction for pages with high commercial value or recent content updates that require faster crawl refresh.
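Shortest click paths come from a breadth-first search over the internal link graph, where each link counts as one click. This sketch assumes the exported link data has already been turned into a dict mapping each source URL to its link targets; canonical URLs with no internal path at all are reported with a depth of None.

```python
from collections import deque
from typing import Iterable, Optional


def click_depths(links: dict[str, list[str]], home: str = "/") -> dict[str, int]:
    """Breadth-first search from the homepage: each internal link is one click."""
    depth = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depth:  # first visit in BFS order is the shortest path
                depth[target] = depth[page] + 1
                queue.append(target)
    return depth


def too_deep(links: dict[str, list[str]], canonical_urls: Iterable[str],
             max_depth: int = 3, home: str = "/") -> dict[str, Optional[int]]:
    """Canonical URLs deeper than max_depth, plus unreachable ones (depth None)."""
    depth = click_depths(links, home)
    result = {}
    for url in canonical_urls:
        d = depth.get(url)
        if d is None or d > max_depth:
            result[url] = d
    return result
```

Adding a link from a depth-1 hub to a depth-4 page and rerunning click_depths drops that page to depth 2, which is the flattening mechanism described above.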

Measuring Improvement Over 30–60 Days

Crawl budget optimization does not produce overnight results. Google’s re-evaluation of crawl allocation following architectural changes typically requires 30–60 days of sustained signal before the new pattern is reflected in log data. Measuring within the first two weeks produces noise, not signal.

The measurement framework operates across three data sources simultaneously.

Log data comparison: pull the same 30-day crawl analysis that established the baseline. Compare crawl share by URL type against the pre-optimization baseline. The target metrics: canonical content crawl share should increase, and waste category shares should decrease correspondingly. Any category where share remains unchanged despite directive implementation indicates the fix was not applied correctly at the template level.
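A sketch of the comparison, assuming hit counts per URL type from the baseline and post-change windows. The 2-percentage-point tolerance for "unchanged" is an assumption; a waste category flagged as unchanged is the signal that a fix may not have reached the template level.

```python
def share_deltas(baseline: dict[str, int], current: dict[str, int],
                 tolerance: float = 0.02) -> dict[str, tuple[float, float, bool]]:
    """Per URL type: (share before, share after, unchanged within tolerance)."""
    b_total, c_total = sum(baseline.values()), sum(current.values())
    report = {}
    for category in sorted(set(baseline) | set(current)):
        before = baseline.get(category, 0) / b_total
        after = current.get(category, 0) / c_total
        report[category] = (round(before, 4), round(after, 4), abs(after - before) < tolerance)
    return report
```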

GSC Page indexing delta: monitor the “Not indexed” reasons in the Page indexing report (formerly Index Coverage, where they were grouped as “Excluded”) weekly. Specifically track “Duplicate without user-selected canonical,” “Crawled - currently not indexed,” and “Discovered - currently not indexed.” Successful canonical consolidation produces a measurable reduction in the duplicate exclusion count. Successful depth flattening reduces “Discovered - currently not indexed” for previously buried content.

Crawl stats in GSC: the crawl stats report shows daily Googlebot activity — total requests, average response time, and total response size. Post-optimization, the total request count may initially decrease as waste categories are blocked. Simultaneously, the response time distribution should improve as server resources are freed from serving parameter variants. A healthy outcome: fewer total requests, higher proportion landing on canonical content, stable or improved server response times.

Re-run the technical SEO checklist at the 30-day mark to confirm all directive implementations remain intact. Template updates, CMS plugin changes, and CDN configuration changes between the implementation date and the measurement window can silently reverse fixes.

Enterprise-Level Considerations

Crawl budget optimization on sites with millions of URLs, multi-CDN delivery infrastructure, and JavaScript-heavy front ends operates under different constraints than standard implementations. Each dimension introduces its own failure mode.

Multi-Million URL Sites

At the scale of 10M+ URLs, the primary challenge is not identifying waste categories — those are predictable. The challenge is implementation velocity. Template-level directive changes that take a developer two hours to deploy on a 50,000-URL site may require coordination across eight engineering teams, a QA cycle, and a staged rollout process on a 10M-URL platform. During that delay, crawl waste continues.

Prioritization at scale requires a crawl waste impact model: rank waste categories by request volume multiplied by ease of implementation. High-volume tracking parameter waste that can be fixed via a single CDN redirect rule should precede complex facet canonicalization requiring CMS template changes. Quick wins that recover 5–10% of crawl budget create measurable log signal that justifies further engineering investment in harder fixes.
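The impact model reduces to a score and a sort. Ease is a hypothetical 0-to-1 estimate agreed with engineering (1 meaning a single configuration change), and the request volumes in a real run would come from the log segmentation.

```python
def prioritize(categories: dict[str, tuple[int, float]]) -> list[tuple[str, float]]:
    """Rank waste categories by request volume multiplied by ease of implementation.

    categories maps a name to (googlebot_requests, ease), where ease is a team
    estimate between 0 and 1 (1 = a single config change such as a CDN rule).
    """
    scored = [(name, requests * ease) for name, (requests, ease) in categories.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)
```

With hypothetical inputs of 140,000 tracking-parameter hits at ease 0.9 and 410,000 facet hits at ease 0.2, the tracking fix scores higher despite its smaller volume, matching the sequencing described above.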

Structured crawl budget governance — regular log review cycles, defined ownership of crawl efficiency metrics, and integration with the deployment pipeline — is the sustainable mechanism. One-time fixes erode without governance. The technical SEO audit framework should include a crawl budget module run on a quarterly cadence at minimum, with monthly log pulls for active monitoring.

CDN and Server Response Time

Crawl rate limit, the capacity side of crawl budget, is sensitive to server response time and server errors. Slow responses or repeated 5xx errors cause Googlebot to back off its crawl rate to avoid overloading the origin. This means that server performance directly affects how much crawling Google is willing to do on a domain, independent of the waste reduction work on the content side.

CDN caching is the most direct lever. URLs served from CDN cache typically respond quickly, often in under 100ms, which Googlebot interprets as a healthy server signal, supporting a higher crawl rate limit. Ensure canonical content pages are properly cached at the CDN layer. Parameter variants that cannot be blocked should still be cached where possible: their presence in the crawl surface is damaging enough without also degrading the server response signal.

Monitor average Googlebot response times in the GSC crawl stats report. Response times consistently above 500ms are a crawl rate suppression risk. Identify the slowest-responding URL patterns in log data — these are typically uncached dynamic pages or database-heavy category queries — and prioritize them for CDN caching or backend optimization.
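A sketch of finding the slowest patterns, assuming the log format has been extended with a response time field; the combined format does not include one, and Apache’s %D (microseconds) and Nginx’s $request_time (seconds) are the usual additions. The grouping by first path segment plus sorted parameter names is a hypothetical stand-in for a proper template mapping.

```python
from collections import defaultdict
from statistics import median
from typing import Iterable


def pattern_of(path: str) -> str:
    """Collapse a URL into its first path segment plus sorted parameter names."""
    path_part, _, query = path.partition("?")
    trimmed = path_part.strip("/")
    segment = "/" + trimmed.split("/")[0] if trimmed else "/"
    params = sorted({kv.split("=")[0] for kv in query.split("&") if kv})
    return segment + ("?" + "&".join(params) if params else "")


def slowest_patterns(timed_hits: Iterable[tuple[str, float]], min_hits: int = 20,
                     limit: int = 10) -> list[tuple[str, float, int]]:
    """timed_hits: (path, response_seconds). Returns (pattern, median seconds, hits),
    slowest first, ignoring patterns with too few hits to be meaningful."""
    by_pattern = defaultdict(list)
    for path, seconds in timed_hits:
        by_pattern[pattern_of(path)].append(seconds)
    ranked = [(p, median(t), len(t)) for p, t in by_pattern.items() if len(t) >= min_hits]
    return sorted(ranked, key=lambda row: row[1], reverse=True)[:limit]
```

Patterns whose median sits above 0.5 seconds are the ones the 500ms guideline flags as first candidates for CDN caching or backend work.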

Rendering Queue and JavaScript Interaction

JavaScript rendering introduces a second budget layer that operates independently of the primary crawl budget. A URL can be crawled immediately but still wait in Google’s rendering queue for an unpredictable period before its JavaScript-rendered content is processed. During that window, any internal links, structured data, or canonical tags injected via JavaScript are invisible to the indexing pipeline.

The architectural rule is absolute: all SEO-critical directives — canonicals, meta robots, hreflang, Schema.org structured data — must be present in the server-side HTML response. Directives applied post-hydration are unreliable for large-scale crawl budget scenarios where rendering queue latency is a real operational constraint.

Core Web Vitals are a secondary but real consideration in the same rendering architecture. Pages with poor LCP or CLS scores, driven by JavaScript rendering patterns, may experience lower crawl priority as Google’s systems evaluate page experience signals alongside content signals. Optimizing rendering architecture for crawl efficiency and optimizing it for Core Web Vitals performance are not separate workstreams; they share the same upstream cause.

Architecture Integration Layer

Crawl budget optimization does not operate in isolation. It is one layer of a multi-dimensional technical SEO system, and its effectiveness depends on the integrity of the surrounding architecture.

The connection to technical SEO architecture is foundational. Crawl waste patterns — parameter proliferation, facet expansion, orphan accumulation — are outputs of architectural decisions: how URLs are generated, how templates apply directives, how the internal link graph is maintained. Crawl budget fixes applied on top of a broken architecture are temporary. The structural decisions that generate waste must be addressed at the design level, not patched at the URL level.

The technical SEO audit is the diagnostic mechanism that surfaces crawl waste before it reaches critical levels. Canonical chain failures, orphan populations, soft-404 accumulations, and directive inconsistencies — all of which damage crawl efficiency — are standard audit findings. Running quarterly audits with a crawl budget module integrated into the audit scope is the operational requirement for maintaining efficiency over time.

The technical SEO checklist provides the governance layer. Every deployment should pass crawl-budget-relevant checks: canonical output validation, meta robots consistency, sitemap URL integrity, and robots.txt accuracy. The checklist converts crawl budget best practices into blocking release conditions rather than post-deployment remediation tasks.

Common failures that degrade crawl efficiency post-optimization are documented in the technical SEO implementation mistakes reference. Template-level canonical errors, staging environment index leaks, A/B testing parameter proliferation, and CDN misconfiguration are the four most frequent post-deployment crawl budget regressions. Reviewing these failure patterns before and after major deployments is a standard risk mitigation step.

Frequently Asked Questions

How do I know if crawl budget is actually a problem for my site?

Pull 30 days of server logs and segment Googlebot requests by URL type. If non-canonical content — parameters, facets, pagination, soft-404s — accounts for more than 40% of Googlebot hits while your canonical pages sit at under 30%, crawl budget is a measurable problem. GSC crawl stats showing high request volume combined with a large “Discovered — currently not indexed” population is a secondary indicator. Log data is definitive; GSC data is directional.

Does robots.txt disallow actually save crawl budget, or does Google still count those URLs?

Robots.txt disallow prevents the fetch: Googlebot does not request the URL, which means no server resources are consumed and no crawl allocation is spent on that request. However, Google can still discover and index disallowed URLs, without their content, through external links. The crawl budget saving is real and immediate. The indexation risk for externally linked disallowed URLs is the trade-off, which is why session IDs and high-volume tracking parameter patterns, which typically carry little external link equity, are strong robots.txt candidates, while commercially linked facet pages are better served by canonical consolidation.

How long does it take for crawl budget improvements to appear in GSC?

Directive changes typically propagate within two to four weeks for pages Googlebot has already crawled recently. The GSC crawl stats report reflects activity with a one-to-two day lag. Page indexing report changes, such as a reduction in “Not indexed” counts or movement of URLs from “Discovered” to “Indexed,” typically require four to eight weeks before the full impact is visible. Log data shows the crawl allocation shift faster than GSC, often within two to three weeks of implementation.

What is the difference between crawl budget optimization and crawl rate optimization?

Crawl rate determines how fast Googlebot crawls a site. It is constrained by server capacity, and it can no longer be capped manually in GSC: Google retired the Search Console crawl rate limiter in early 2024. If server overload is an active problem, Google’s guidance is to temporarily return 500, 503, or 429 status codes, which causes Googlebot to slow down. Crawl budget optimization addresses the demand side: which URLs receive crawl allocation, how that allocation is distributed across URL types, and how efficiently each crawl request contributes to indexation. Crawl rate optimization is a server performance problem. Crawl budget optimization is an architectural efficiency problem. Most sites benefit from addressing the demand side, not throttling the rate.

Should I submit non-canonical URLs in my sitemap to get them crawled for canonical consolidation signals?

No. Sitemaps should contain only canonical, indexable URLs returning 200 status codes. Including non-canonical URLs in the sitemap creates conflicting signals — the sitemap implies indexation intent while the canonical tag on the page denies it. Google does resolve this conflict in most cases, but it reduces sitemap authority as a crawl demand signal over time. Non-canonical URLs that need their canonical tag processed will be crawled organically once Googlebot discovers them through internal links or external references.

At what URL scale does crawl budget become a critical optimization priority?

Google’s crawl budget documentation targets large sites (1 million+ unique pages) whose content changes about weekly and medium-sized sites (10,000+ unique pages) whose content changes daily. In practice, the threshold is lower when parameter proliferation exists. A site with 5,000 canonical pages generating 100,000 parameter variants faces the same crawl dilution as a million-URL enterprise site with proportional waste. The better trigger is waste ratio: when non-canonical requests exceed 40% of Googlebot hits in log data, crawl budget optimization is a priority regardless of absolute URL count. Sites with fewer than 1,000 canonical pages and clean URL architecture typically do not face meaningful crawl budget constraints.