Most indexation failures are not content failures. They are architectural failures — templates emitting wrong directives, canonical chains unresolved at scale, parameter variants silently occupying index slots meant for revenue pages. Technical SEO indexation control is the discipline of designing, implementing, and continuously validating which URLs enter Google’s index, which consolidate to canonical versions, and which are permanently excluded. This guide builds that system from the ground up: detection methodology, decision frameworks, template-level protocols, and drift monitoring.

For the foundational URL generation and crawl path logic that upstream indexation decisions depend on, the technical SEO architecture framework defines the structural model this guide extends.

What Indexation Control Actually Governs

Indexation control is not about adding noindex tags reactively. It is a system design that determines — at the template level, before URLs are ever generated — exactly which URL states are indexable, which are consolidated, and which are blocked from the crawl graph entirely.

Google’s Search documentation distinguishes between three independent pipeline decisions: discovery (can Googlebot find the URL?), crawling (will Googlebot fetch it?), and indexation (will Google include it in the index?). Each stage has distinct controls. Conflating them — blocking crawl when consolidation is needed, applying noindex when a canonical would preserve equity — is the primary source of large-scale indexation errors.

At enterprise scale, the magnitude of the problem compounds. A site with 500,000 canonical pages commonly exposes 5–10 million crawlable URL variants through parameter combinations, facets, and dynamically generated paths. Without a systematic indexation control architecture, each of those variants becomes an indexation ambiguity that Google resolves independently — and not always in alignment with business intent.

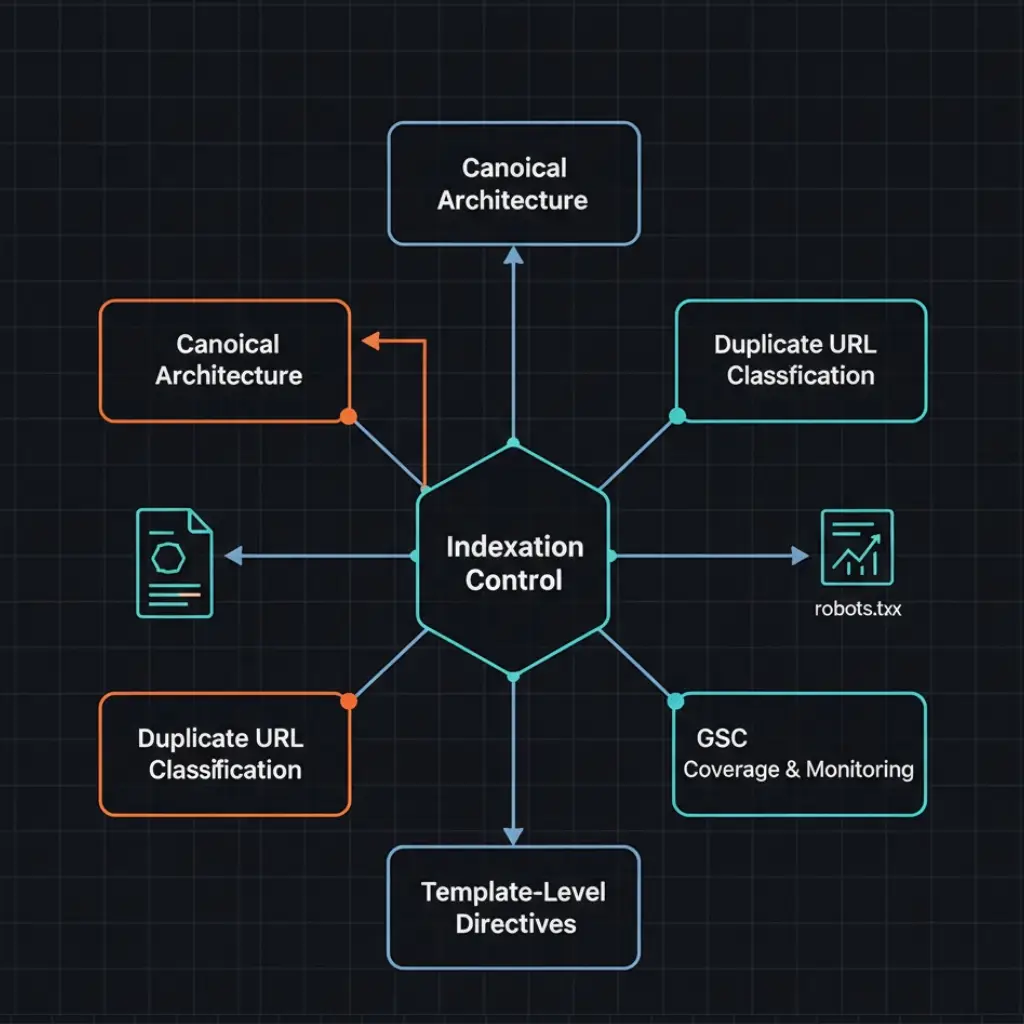

Technical SEO Indexation Control Framework at Scale

At the system level, technical SEO indexation control is not a collection of per-page fixes — it is an architectural discipline that governs how directives are generated, validated, monitored, and enforced across every URL-producing template in the site. The distinction matters operationally: a page-level fix affects one URL; a template-level fix affects every URL that template produces, now and in every future deployment.

Operationally, the framework has three enforcement layers. The first is pre-deployment — the template directive map defines canonical strategy, meta robots directive, sitemap inclusion, and structured data type for every URL-generating template before any code ships. The second is runtime monitoring — monthly crawl comparisons, GSC Coverage delta tracking, and post-deployment directive audits detect the moment any template-level directive diverges from the specification. The third is governance — every new URL pattern, parameter type, or template modification passes through an SEO review gate before reaching production, with automated CI/CD checks as the enforcement mechanism.

Consequently, sites that implement this framework at all three layers achieve measurable index stability: canonical content pages maintain consistent indexation states across deployments, waste URL categories exit the index predictably following directive implementation, and index drift events are detected within 48 hours rather than discovered weeks later through ranking degradation. The framework described in this guide aligns directly with the broader technical SEO audit cadence, where indexation control validation is a standing module in every audit cycle.

Duplicate State Classification Model

Before applying any directive, every URL type must be classified within a defined duplicate state taxonomy. Applying the wrong directive to the wrong duplicate state is a recurring implementation mistake in enterprise deployments.

The five duplicate state categories:

– Exact duplicate: Same content, different URL. Cause: trailing slash inconsistency, HTTP/HTTPS variants, www/non-www, case sensitivity. Treatment: 301 redirect to canonical version. A canonical tag alone is insufficient — these should be structurally eliminated, not just consolidated.

– Near-duplicate parameter variant: Same template, same content block, parameter appended (sort, filter, tracking). Cause: URL generation without parameter governance. Treatment: canonical to clean URL. Where there is no external link exposure and crawl volume is high, block the pattern in robots.txt instead; a blocked URL is never fetched, so any canonical tag on it goes unread.

– Thin content duplicate: Same template, unique URL, minimal unique content — empty filtered categories, discontinued product pages, paginated tails beyond meaningful content. Cause: template generating URLs without content gate logic. Treatment: noindex if the URL must remain accessible; 410 if it should be permanently removed.

– Canonical consolidation target: URL with legitimate traffic or link equity that should consolidate to a primary version. Cause: legacy URL patterns, migrated content, regional variants without hreflang. Treatment: 301 redirect where possible; canonical tag where redirect is not feasible.

– Intentionally excluded URL: Admin pages, internal tooling, staging leaks, duplicate checkout flows. Cause: infrastructure URLs entering the crawl graph without exclusion design. Treatment: robots.txt disallow for zero-equity paths; noindex for paths that must remain crawlable.

This taxonomy becomes the master reference for the indexation directive system. Every URL-generating template in the CMS must map to one of these states before deployment.
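As a minimal sketch, the taxonomy can be held in code so that a deployment check can list templates that have no state assigned. The enum member names, the `DEFAULT_TREATMENT` wording, and the `unclassified_templates` helper are illustrative assumptions, not part of any particular CMS.

```python
from enum import Enum
from typing import Dict, List, Optional


class DuplicateState(Enum):
    EXACT_DUPLICATE = "exact duplicate"
    PARAMETER_VARIANT = "near-duplicate parameter variant"
    THIN_CONTENT = "thin content duplicate"
    CONSOLIDATION_TARGET = "canonical consolidation target"
    INTENTIONALLY_EXCLUDED = "intentionally excluded URL"


# Default treatment per state, paraphrased from the taxonomy above.
DEFAULT_TREATMENT = {
    DuplicateState.EXACT_DUPLICATE: "301 redirect to canonical version",
    DuplicateState.PARAMETER_VARIANT: "canonical to clean URL",
    DuplicateState.THIN_CONTENT: "noindex, or 410 if permanently removed",
    DuplicateState.CONSOLIDATION_TARGET: "301 redirect, else canonical tag",
    DuplicateState.INTENTIONALLY_EXCLUDED: "robots.txt disallow or noindex",
}


def unclassified_templates(
    template_states: Dict[str, Optional[DuplicateState]]
) -> List[str]:
    """Return the names of templates that have no duplicate state assigned."""
    return sorted(name for name, state in template_states.items() if state is None)
```

A pre-deployment check could fail whenever `unclassified_templates` returns a non-empty list.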

Canonical Architecture Framework

Canonicalization is the primary indexation consolidation mechanism — but only when implemented correctly at the template level. A canonical tag is a hint, not a directive. Google respects canonicals when they are consistent, non-contradictory, and technically valid. Contradictions, chains, and template-level errors reduce canonical authority and cause Google to resolve canonical questions independently.

The canonical architecture has four layers:

– Self-referencing canonicals: Every indexable URL must declare itself as its own canonical. This prevents parameter variants or session ID appends from generating ambiguous canonical states for pages that have no intended duplicates.

– Consolidation canonicals: Non-canonical URLs point to their authoritative version. The canonical target must be a 200-status, indexable page. Canonicals pointing to redirected URLs, noindexed pages, or other non-canonical URLs invalidate the consolidation.

– Cross-domain canonicals: Used in content syndication to preserve equity on the originating domain. Requires rel=canonical in the HTTP header or HTML head of the syndicated copy pointing back to the source.

– Pagination handling: Paginated URLs should carry self-referencing canonicals, not canonicals pointing to page 1. Canonicalizing paginated pages to the root collapses the content of deep pages and signals thin content rather than consolidated authority.

A critical implementation constraint: canonical tags, meta robots directives, and structured data injected only via client-side JavaScript are unreliable. Googlebot crawls and renders in separate pipeline stages — a directive injected post-hydration may not be read at crawl time at all. All canonical declarations must be present in the raw server-side HTML response, verifiable via curl before any JavaScript executes.
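One way to check the raw response is to parse the HTML exactly as the server sent it and list the canonical and meta robots values it contains. The sketch below uses only Python's standard `html.parser`; the class and function names are illustrative, and the input is assumed to be the unrendered response body (for example, the output of curl saved to a string).

```python
from html.parser import HTMLParser


class HeadDirectiveParser(HTMLParser):
    """Collects rel=canonical hrefs and meta robots values from raw HTML."""

    def __init__(self):
        super().__init__()
        self.canonicals = []
        self.robots = []

    def handle_starttag(self, tag, attrs):
        # Attribute values can be None for valueless attributes.
        attr = {name: (value or "") for name, value in attrs}
        if tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonicals.append(attr.get("href", ""))
        elif tag == "meta" and attr.get("name", "").lower() == "robots":
            self.robots.append(attr.get("content", "").lower())


def extract_directives(raw_html: str) -> dict:
    """Return the canonical and robots directives found in unrendered HTML."""
    parser = HeadDirectiveParser()
    parser.feed(raw_html)
    return {"canonicals": parser.canonicals, "robots": parser.robots}
```

An empty `canonicals` list for a page whose rendered DOM shows a canonical tag suggests the tag is being injected client-side. More than one entry indicates conflicting declarations.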

Detection requires crawling the full URL set and extracting canonical output per URL. Any URL where the declared canonical differs from the expected canonical per the template specification is a failure state. Run the detection protocol as part of every technical SEO audit cycle.

Canonical Chain Elimination Protocol

A canonical chain exists when URL A canonicalizes to URL B, and URL B canonicalizes to URL C. Treat one hop as the limit. Canonicals are hints, and nothing guarantees that Google will follow a chain to its end, so chains longer than one hop leave the final canonical authority unresolved: Google may index any URL in the chain, or none of them.

Problem: Canonical chains are invisible at the URL level and devastating at scale. A single template error generating a chain affects every URL produced by that template simultaneously.

Cause: Chains emerge from compounding template mistakes. Common patterns: a parameter URL canonicalizes to a clean URL that was later 301-redirected; a paginated page canonicalizes to the next page rather than to itself; a locale variant canonicalizes to a regional hub that itself carries a consolidation canonical to the global root.

Detection: Crawl with Screaming Frog and export the canonical chain report. Filter for chain length >1. Any result is a failure state. Cross-reference with server logs to determine how frequently Googlebot is hitting chained URLs — high-frequency chained URLs represent the highest-priority remediation targets.

Fix: Resolve chains at the template level by updating the intermediate URL’s canonical to point directly to the final target. Do not patch individual URLs — any template-level chain will regenerate as new URLs are created. After deployment, re-crawl and re-export the chain report to confirm chain length returns to 1 across all affected URL patterns. This verification step is non-negotiable before closing the remediation ticket.
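Chain detection can also be scripted against any crawl export that lists each URL with its declared canonical. The following is a sketch under that assumption: `canonical_of` is a hypothetical mapping built from such an export, and a URL counts as resolved when its canonical is itself or is missing from the export.

```python
def canonical_chain(url, canonical_of, max_hops=10):
    """Follow declared canonicals from url and return the path taken."""
    chain = [url]
    seen = {url}
    while len(chain) <= max_hops:
        target = canonical_of.get(chain[-1])
        if target is None or target == chain[-1]:
            break  # unknown URL or self-referencing canonical: path ends here
        chain.append(target)
        if target in seen:
            break  # canonical loop: stop after recording the repeated URL
        seen.add(target)
    return chain


def find_chains(canonical_of):
    """Return every URL whose canonical path takes more than one hop."""
    chains = {}
    for url in canonical_of:
        chain = canonical_chain(url, canonical_of)
        if len(chain) - 1 > 1:
            chains[url] = chain
    return chains
```

Running `find_chains` before and after a template fix gives a simple pass/fail signal: the result should be empty once every affected pattern resolves in a single hop.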

Noindex vs Canonical Decision Framework

Noindex and canonical are not interchangeable. Choosing between them requires evaluating three independent variables: link equity exposure, crawl budget allocation, and content consolidation intent.

| Scenario | External Links? | Crawl Budget Impact | Recommended Directive | Reasoning |
|---|---|---|---|---|
| Tracking parameter variant | No | High waste | Robots.txt disallow | No equity to preserve; maximum crawl budget recovery. A canonical on a blocked URL is never fetched, so the disallow stands alone |
| Faceted filter with commercial value | Possible | Medium waste | Canonical to parent category | Preserves any inbound equity; consolidates to relevant parent |
| Thin paginated tail (page 20+) | No | Low waste | Noindex | Keeps URL accessible; removes from index; crawl cost manageable |
| Discontinued product (no replacement) | Possible | Low | 410 or 404 | Signals permanent removal; eliminates crawl allocation over time |
| Duplicate checkout / cart URL | No | Low | Noindex; robots.txt disallow only after deindexing | Must not be indexed. Googlebot has to fetch the page to read noindex, so block crawling only once the URLs have left the index |
| Locale variant with hreflang | Yes | Medium | Self-referencing canonical + hreflang | Each locale must be indexable; canonical must not point cross-locale |
| Staging environment URL in production | No | Low | Robots.txt disallow (staging) + noindex | Double protection; staging must never enter production index. Noindex is only read on URLs that are not disallowed |

The decision tree operates in sequence: first evaluate whether the URL should ever be indexed. If yes, assign a self-referencing canonical and ensure it is indexable. If no, determine whether external link equity exists. If equity exists, assign a consolidation canonical. If no equity exists and crawl volume is high, use robots.txt disallow. If no equity exists and crawl volume is low, noindex is sufficient.
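The sequence above translates directly into a small function. This is a sketch of the decision order only; the three boolean inputs are assumed to come from whatever equity and log analysis the team already runs, and the returned labels are illustrative.

```python
def choose_directive(should_be_indexed: bool,
                     has_external_equity: bool,
                     crawl_volume_high: bool) -> str:
    """Apply the decision tree in the order described above."""
    if should_be_indexed:
        return "self-referencing canonical"
    if has_external_equity:
        return "consolidation canonical"
    if crawl_volume_high:
        return "robots.txt disallow"
    return "noindex"
```

Note that link equity is checked before crawl volume, so a non-indexable URL with external links gets a consolidation canonical even when its crawl volume is high.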

Parameter Canonical Governance

Parameter governance is the most operationally intensive component of indexation control on large sites. Without a centralized governance model, parameter handling decisions accumulate inconsistently across teams and deployments.

Problem: Unclassified parameters generate duplicate URL variants at combinatorial scale. A site with 30 active parameter types — analytics, A/B testing, sorting, filtering, affiliate tracking — can generate millions of crawlable variants of a 50,000-page canonical set.

Cause: Each team that introduces a new parameter — marketing for campaign tracking, engineering for A/B tests, product for feature flags — operates without a shared canonical governance layer. Parameters accumulate in the URL space without triggering SEO review.

Detection: Extract all unique parameter strings appearing in server logs over a 60-day window. Group by parameter family. Calculate total Googlebot request share per family. Any parameter family consuming >3% of total crawl requests is a governance target. Cross-reference against the technical SEO crawl budget optimization log segmentation model for complete crawl waste attribution.
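The share calculation can be sketched with the standard library, assuming the Googlebot-requested URLs have already been extracted from the logs into a list. For simplicity this example treats each parameter name as its own family, which is an assumption; a real grouping might merge related names such as the `utm_` set.

```python
from collections import Counter
from urllib.parse import parse_qsl, urlsplit


def parameter_crawl_share(googlebot_urls):
    """Map each parameter name to the share of requests that include it."""
    total = len(googlebot_urls)
    if total == 0:
        return {}
    counts = Counter()
    for url in googlebot_urls:
        query = urlsplit(url).query
        # Count each parameter name once per request, even if repeated.
        names = {name for name, _ in parse_qsl(query, keep_blank_values=True)}
        counts.update(names)
    return {name: count / total for name, count in counts.items()}


def governance_targets(googlebot_urls, threshold=0.03):
    """Return parameter names whose request share exceeds the threshold."""
    shares = parameter_crawl_share(googlebot_urls)
    return sorted(name for name, share in shares.items() if share > threshold)
```

The default threshold mirrors the 3% figure above and can be tuned per site.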

Fix: Build a parameter registry. Every parameter introduced to the site must be registered with a canonical treatment decision before deployment — canonical to clean URL, canonical to parent, blocked in robots.txt, or session-scoped and stripped at the CDN layer. The parameter registry is a living document owned jointly by SEO and engineering. New parameter launches without registry entry are a deployment blocker defined in the technical SEO checklist governance layer.
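A registry can start as a plain mapping from parameter name to treatment. The sketch below is illustrative only: the entries in `PARAMETER_REGISTRY`, the two treatment labels, and both helper names are assumptions, and the real registry would carry the fuller set of treatments listed above.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical registry: "keep" parameters stay in the canonical URL,
# "strip" parameters are removed from it.
PARAMETER_REGISTRY = {
    "utm_source": "strip",
    "utm_medium": "strip",
    "sort": "strip",
    "page": "keep",
}


def unregistered_parameters(url):
    """Return parameter names in the URL that have no registry entry."""
    query = urlsplit(url).query
    names = {name for name, _ in parse_qsl(query, keep_blank_values=True)}
    return sorted(names - set(PARAMETER_REGISTRY))


def canonical_url(url):
    """Build the canonical URL by keeping only parameters registered as 'keep'.

    Unregistered parameters are dropped as well; that is a design choice
    of this sketch, not a rule from the registry itself.
    """
    parts = urlsplit(url)
    kept = [
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if PARAMETER_REGISTRY.get(name) == "keep"
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))
```

A CI check could call `unregistered_parameters` on URLs emitted by new templates and fail the build when the list is not empty.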

Soft-404 Index Control

Soft-404s are 200-status URLs serving content that signals no meaningful existence — empty search results, discontinued product pages with placeholder templates, filtered categories with zero matching items, expired events with generic fallback content.

Problem: Soft-404s contaminate the index with thin, low-quality pages that consume index slots, dilute domain quality signals, and generate “Crawled — currently not indexed” GSC noise that masks real indexation failures.

Cause: Template systems generate URLs without content gate logic. A product page template renders for any product ID whether the product exists or not. A filtered category template renders for any filter combination whether results exist or not. The HTTP response is 200 regardless of content state.

Detection: Export all 200-status URLs from server logs. Crawl the exported URL set and flag pages where the content word count falls below a defined threshold (commonly 200–300 words for transactional pages) or where a predefined “no results” DOM element is present. Cross-reference against GSC Coverage’s “Crawled — currently not indexed” bucket — a high volume in that state correlates strongly with soft-404 concentration.

The fix operates at two levels. Template-level: implement content gate logic that returns 404 when no meaningful content exists for a given URL. For product pages, gate on product existence in the database. For filtered categories, gate on result count: zero results returns 404, not a no-results template. URL-level for legacy soft-404s: audit existing URLs in the “Crawled - currently not indexed” state, confirm soft-404 status, and return 410 for permanently empty URLs or redirect to the nearest relevant parent for discontinued content.
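The template-level gate reduces to choosing the HTTP status before the template renders. A minimal, framework-agnostic sketch follows; the function names are hypothetical, and the product lookup is assumed to return `None` when no record exists.

```python
from typing import Optional


def product_page_status(product: Optional[dict]) -> int:
    """Gate on product existence: a missing record yields 404, not a 200 shell."""
    return 200 if product is not None else 404


def filtered_category_status(result_count: int) -> int:
    """Gate on result count: zero results yields 404 instead of a no-results page."""
    return 200 if result_count > 0 else 404
```

In a real application these checks would sit in the request handler, ahead of any rendering, so that the status code and the content always agree.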

GSC Coverage Debugging System

Google Search Console’s Index Coverage report (labeled Page indexing in the current interface) is the primary indexation monitoring surface. However, the report requires systematic interpretation: its categories are descriptive, not prescriptive, and many sites misread the states.

The GSC Coverage debugging system operates per state:

– Submitted and indexed: Healthy state. Monitor for unexpected exclusion — pages moving out of this state without a corresponding directive change signal a crawl or rendering failure.

– Indexed, not submitted in sitemap: Acceptable for URLs that Google discovered through internal links and that are deliberately left out of sitemaps. Problematic if canonical content pages are missing from sitemaps; review sitemap hygiene per the technical SEO checklist.

– Duplicate without user-selected canonical: Google found duplicate content and selected its own canonical, which may differ from yours. Cause: inconsistent canonical implementation or canonical tags absent on duplicate variants. Fix: enforce self-referencing canonicals on all canonical pages; enforce consolidation canonicals on all duplicate variants.

– Duplicate, Google chose different canonical than user: Your declared canonical conflicts with Google’s chosen canonical. Cause: canonical tag present but contradicted by other signals — internal links, inbound equity, or content similarity weighting. Fix: audit why Google’s chosen canonical differs; reinforce the correct version through internal links and sitemap inclusion.

– Crawled — currently not indexed: URL was crawled but not indexed. Cause varies: thin content, soft-404, canonical chain, rendering failure, or quality signal below threshold. Fix: segment by URL type to identify the dominant cause; apply the corresponding fix from the duplicate state classification model.

– Discovered — currently not indexed: URL is known to Google but not yet crawled or indexed. Cause: insufficient crawl budget, low crawl priority, deep click depth. Fix: flatten click depth, increase internal link frequency to affected pages, verify sitemap inclusion.

– Excluded by noindex: Expected for intentionally excluded URLs. Unexpected noindex exclusions signal a template-level directive error — run template directive validation immediately.

Monitor each state weekly during active optimization. Any unexpected movement — canonical pages appearing in exclusion states, noindex pages appearing in indexed states — triggers immediate template directive audit.
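Weekly monitoring is easier to act on when the counts are compared programmatically. The sketch below assumes the per-state URL counts are recorded each week in a plain dictionary (for instance, copied from the report); the 10% `tolerance` is an arbitrary illustrative default, not a recommended threshold.

```python
def coverage_deltas(previous, current):
    """Return the week-over-week change in URL count for every state."""
    states = set(previous) | set(current)
    return {s: current.get(s, 0) - previous.get(s, 0) for s in states}


def unexpected_growth(previous, current, watched_states, tolerance=0.10):
    """Return watched states whose count grew by more than the tolerance."""
    flagged = []
    for state in watched_states:
        before = previous.get(state, 0)
        after = current.get(state, 0)
        if after > before * (1 + tolerance):
            flagged.append(state)
    return flagged
```

Any state returned by `unexpected_growth` would be a prompt to run the template directive audit described above.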

Template-Level Indexation Mapping

Every URL-generating template is an indexation declaration. A template that generates 50,000 URLs applies a single set of directives to all 50,000 pages simultaneously. Template-level errors are, therefore, 50,000-URL indexation errors.

The template directive map is a pre-deployment document that specifies, for every URL-generating template in the CMS: canonical strategy (self-referencing, parent consolidation, or cross-domain), meta robots directive (index/follow, noindex/follow, noindex/nofollow), sitemap inclusion rule, hreflang configuration if applicable, and structured data type.

| Template Type | Canonical Strategy | Meta Robots | Sitemap | Structured Data |
|---|---|---|---|---|
| Product page | Self-referencing | index, follow | Yes | Product schema |
| Category page | Self-referencing | index, follow | Yes | BreadcrumbList |
| Faceted filter (high-value) | Self-referencing | index, follow | Yes (selective) | BreadcrumbList |
| Faceted filter (low-value) | Points to parent category | noindex, follow | No | None |
| Sorting parameter variant | Points to clean URL | noindex, follow | No | None |
| Paginated archive (page 2+) | Self-referencing | index, follow | No | None |
| Internal search results | Points to site root | noindex, follow | No | None |
| Author archive | Self-referencing (if indexed) | index, follow or noindex | Conditional | Person schema (if indexed) |
| Tag archive | Points to primary category | noindex, follow | No | None |
| Checkout / cart | N/A | noindex, nofollow | No | None |

The template directive map becomes the SEO source of truth against which all deployments are validated. Any template change that modifies canonical output, meta robots, or sitemap inclusion requires an SEO review sign-off before deployment. Without this gate, a single CMS update can silently rewrite directives across the entire URL population.
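To make the map enforceable, it can be expressed as data and compared against crawled output. The following sketch encodes two rows for illustration; the `TemplateSpec` fields, the template keys, and the `violations` helper are assumptions about how a team might model it, and the canonical strategy is reduced to "self" versus "other" for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TemplateSpec:
    canonical_strategy: str  # "self", or "other" for parent / clean-URL targets
    meta_robots: str
    in_sitemap: bool


# Hypothetical excerpt of a directive map; keys are illustrative template names.
DIRECTIVE_MAP = {
    "product": TemplateSpec("self", "index, follow", True),
    "sort_variant": TemplateSpec("other", "noindex, follow", False),
}


def robots_tokens(value):
    """Normalize a meta robots value so order and spacing do not matter."""
    return frozenset(t.strip().lower() for t in value.split(",") if t.strip())


def violations(template, url, canonical, meta_robots):
    """Compare one crawled page against its template specification."""
    spec = DIRECTIVE_MAP[template]
    problems = []
    is_self = canonical == url
    if spec.canonical_strategy == "self" and not is_self:
        problems.append("canonical is not self-referencing")
    if spec.canonical_strategy == "other" and is_self:
        problems.append("canonical should point to another URL")
    if robots_tokens(meta_robots) != robots_tokens(spec.meta_robots):
        problems.append("meta robots differs from specification")
    return problems
```

A review gate could run `violations` over a sample of URLs per template and require an empty result before sign-off.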

Index Drift Monitoring System

Index drift is the gradual erosion of correct indexation states over time, caused by CMS updates, plugin installations, theme changes, or developer interventions that modify template-level directive output without triggering SEO review.

Index drift is insidious precisely because it is incremental. A plugin update that removes the canonical tag from 200 URLs does not produce an immediate ranking signal — it produces a slow degradation over 4–8 weeks as Google re-evaluates canonical authority for affected pages and may select alternative canonicals.

The monitoring system requires four operational components:

– Monthly crawl comparison: Run a full site crawl on a defined schedule. Export canonical output, meta robots, and HTTP status per URL. Compare against the previous month’s export. Any URL where canonical output or robots directive has changed without a corresponding deployment record is a drift event.

– GSC Coverage weekly delta: Track weekly movement in each Coverage state. Unexpected increases in “Excluded” states — particularly “Duplicate without user-selected canonical” and “Excluded by noindex” — are drift indicators.

– Deployment diff audit: After every CMS update, plugin installation, or theme change, crawl a representative sample of 200–500 URLs across all major template types. Verify canonical and robots output against the template directive map. This audit should occur within 48 hours of any deployment.

– Canonical consistency check: Periodically fetch canonical tags from the raw server-side HTML response — not from the rendered DOM — using curl. Any canonical present in the rendered DOM but absent from the raw HTML response is being injected via JavaScript and is therefore unreliable for crawl-time indexation control.
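The monthly crawl comparison amounts to a diff between two exports. As a sketch, assume each export has been loaded into a dictionary keyed by URL with a `(canonical, meta_robots, status)` tuple as the value; `deployed_urls` is a hypothetical set of URLs covered by a deployment record, whose changes are therefore expected.

```python
def drift_events(previous, current, deployed_urls=frozenset()):
    """Return (url, before, after) for unexplained directive changes."""
    events = []
    # Only URLs present in both exports can be compared.
    for url in sorted(set(previous) & set(current)):
        changed = previous[url] != current[url]
        if changed and url not in deployed_urls:
            events.append((url, previous[url], current[url]))
    return events
```

URLs that appear in only one export are ignored here; new or vanished URLs are a separate signal and would need their own report.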

Index drift monitoring integrates directly with the technical SEO audit cadence. The audit should include a dedicated drift detection module comparing current directive output against the template directive map baseline.

Deployment Guardrails for Indexation Integrity

Deployment guardrails convert indexation control from a reactive discipline into a proactive system. Every deployment that modifies URL generation, template logic, or server configuration must pass a defined set of indexation checks before reaching production.

The blocking conditions for any deployment:

– Canonical output validation: A crawl of a representative URL sample, run against the release candidate before it reaches production, confirms that canonical tags match the template directive map specification. Any deviation blocks deployment.

– Meta robots consistency: No previously indexable template now emits noindex without an explicit SEO decision record. Unexpected noindex on high-priority templates is an immediate rollback trigger.

– Sitemap integrity: All sitemap-listed URLs return 200 status with correct canonical self-reference. Sitemap URLs returning redirects, noindex, or canonical pointing elsewhere indicate a deployment regression.

– Structured data continuity: Run structured data validation against critical template types post-deploy. Errors or missing required fields in schema output can signal a template or rendering regression. Where the markup is injected client-side, they may also point to a JavaScript rendering failure that affects canonical and robots output.

– Robots.txt diff: Confirm robots.txt content is unchanged unless the deployment explicitly modified it. Accidental robots.txt changes that disallow previously crawlable paths are among the highest-severity indexation incidents.

– JS directive verification: Fetch canonical and robots tags from raw server-side HTML response via curl for all modified templates. Any directive that appears only in the rendered DOM and not in the raw response is unreliable and must be moved server-side before the deployment clears.

These checks should be automated within the CI/CD pipeline and integrated with the broader deployment guardrail system defined in the technical SEO checklist. Manual review is insufficient at deployment frequency — automation is the only viable control at enterprise scale.
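As one example of an automatable check, the robots.txt diff can be tested behaviorally: does any URL that was crawlable under the old file become blocked under the new one? The sketch below uses Python's standard `urllib.robotparser`. Its matching is simpler than Googlebot's (plain prefix rules, no `*` or `$` wildcards inside paths), so treat it as a first-pass guard; the function name and sample URLs are illustrative.

```python
from urllib.robotparser import RobotFileParser


def newly_blocked(old_robots_txt, new_robots_txt, sample_urls, user_agent="Googlebot"):
    """Return sample URLs that were crawlable before and are blocked now."""
    old_rules, new_rules = RobotFileParser(), RobotFileParser()
    old_rules.parse(old_robots_txt.splitlines())
    new_rules.parse(new_robots_txt.splitlines())
    return [
        url
        for url in sample_urls
        if old_rules.can_fetch(user_agent, url)
        and not new_rules.can_fetch(user_agent, url)
    ]
```

A pipeline step could run this against one or two representative URLs per template and fail when the returned list is not empty.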

Core Web Vitals performance is a secondary but real consideration here. Pages with rendering failures that degrade Core Web Vitals scores may also have their canonical and structured data directives compromised if those directives are rendered client-side. The rendering architecture and the indexation control architecture are not independent systems.

Indexation Control Validation Protocol

After implementing any indexation directive change at scale, validation is not optional. The validation protocol operates across a defined timeline:

Week 1–2: Verify directive propagation via crawl. Confirm canonical output, meta robots, and robots.txt changes are active across all affected URL patterns. Log data will begin showing shifted Googlebot behavior — reduced crawl frequency on blocked URL patterns within 10–14 days.

Week 3–4: GSC Coverage delta becomes measurable. Track reduction in target exclusion states. “Duplicate without user-selected canonical” should decline as canonical consolidation propagates. “Crawled — currently not indexed” soft-404 volume should decline as 404/410 responses replace 200-status thin pages.

Week 6–8: Index composition stabilizes. Canonical content share in the index should increase. Compare indexed URL count per template type against pre-implementation baseline. Revenue-critical templates should show stable or increasing indexed URL counts; waste template types should show significant reduction.

Week 8+: Crawl budget redistribution becomes measurable in log data. The technical SEO crawl budget optimization measurement framework provides the log segmentation model for confirming that canonical content pages now receive a higher proportion of total Googlebot crawl allocation.

Throughout the validation window, maintain the deployment guardrail checks on any new deployments. A single template regression during the validation window contaminates the measurement signal and requires timeline reset.

Frequently Asked Questions

What is the difference between noindex and canonical for duplicate content control?

Noindex removes a URL from the index while allowing it to remain crawlable. Canonical consolidates indexation signals to a designated authoritative URL while leaving the duplicate URL in the crawl graph. Use canonical when the duplicate URL may receive external links that should pass equity to the target page. Use noindex when the URL must remain accessible but carries no link equity and no indexation value. Use robots.txt disallow when the URL should not be crawled at all — but note that disallowed URLs with external links may still be indexed based on those external signals alone.

How do you detect canonical chains across a large URL set?

Crawl the full domain with Screaming Frog or Sitebulb and export the canonical chain report. Filter for chain length greater than one — any result is a failure state requiring remediation. For ongoing detection, integrate canonical chain checking into the monthly crawl comparison protocol and into the post-deployment crawl audit. Canonical chains cannot be reliably detected from GSC alone; they require crawl-level inspection of canonical tag output per URL.

Why does Google override declared canonicals?

Google overrides declared canonicals when contradictory signals outweigh the canonical tag. Common override causes: internal links pointing predominantly to the non-canonical version, the non-canonical version having stronger inbound link equity, the canonical tag pointing to a noindexed or redirected page, or the two pages in the canonical pair not being similar enough to count as duplicates. Resolve overrides by reinforcing the intended canonical through internal links and sitemap inclusion, and by ensuring the canonical target is indexable and returns 200. If the two pages differ enough that each deserves its own index entry, drop the consolidation canonical and give each page a self-referencing one.

What causes index drift and how is it detected?

Index drift is caused by CMS plugin updates, theme changes, or developer modifications that alter template-level directive output without SEO review. Detection requires monthly crawl comparison — exporting canonical and robots directive output per URL and comparing against the previous export. Any unexplained directive change between export cycles is a drift event. GSC Coverage weekly delta tracking provides a secondary signal: unexpected increases in exclusion states indicate drift before full crawl confirmation is available.

How should soft-404s be handled differently from true 404s?

A soft-404 returns HTTP 200 but serves thin or no content — it is an indexation problem before it is a user experience problem. True 404s return HTTP 404 and are handled by Google’s standard error processing. Soft-404s require proactive identification through crawl and content analysis, then a decision: if the URL should permanently not exist, implement a 410 Gone response. If the URL should redirect to a relevant parent, implement a 301. If the template cannot be modified to return correct HTTP status codes, apply noindex as a stopgap — but this does not eliminate crawl allocation and is not a permanent solution.

How does indexation control connect to crawl budget optimization?

Indexation control and crawl budget optimization share the same upstream mechanisms — canonical tags, robots.txt, noindex directives, and template-level URL generation rules. Effective indexation control reduces the number of non-canonical URLs in the crawl graph, which directly reduces crawl waste. Conversely, poor indexation control — parameter variants without canonicals, soft-404s without 410 responses, orphan URLs without disallow rules — expands the effective crawl surface and degrades crawl efficiency. The two disciplines must be implemented and measured together, not as separate workstreams.
